How Large Language Models Actually Work, in Plain English

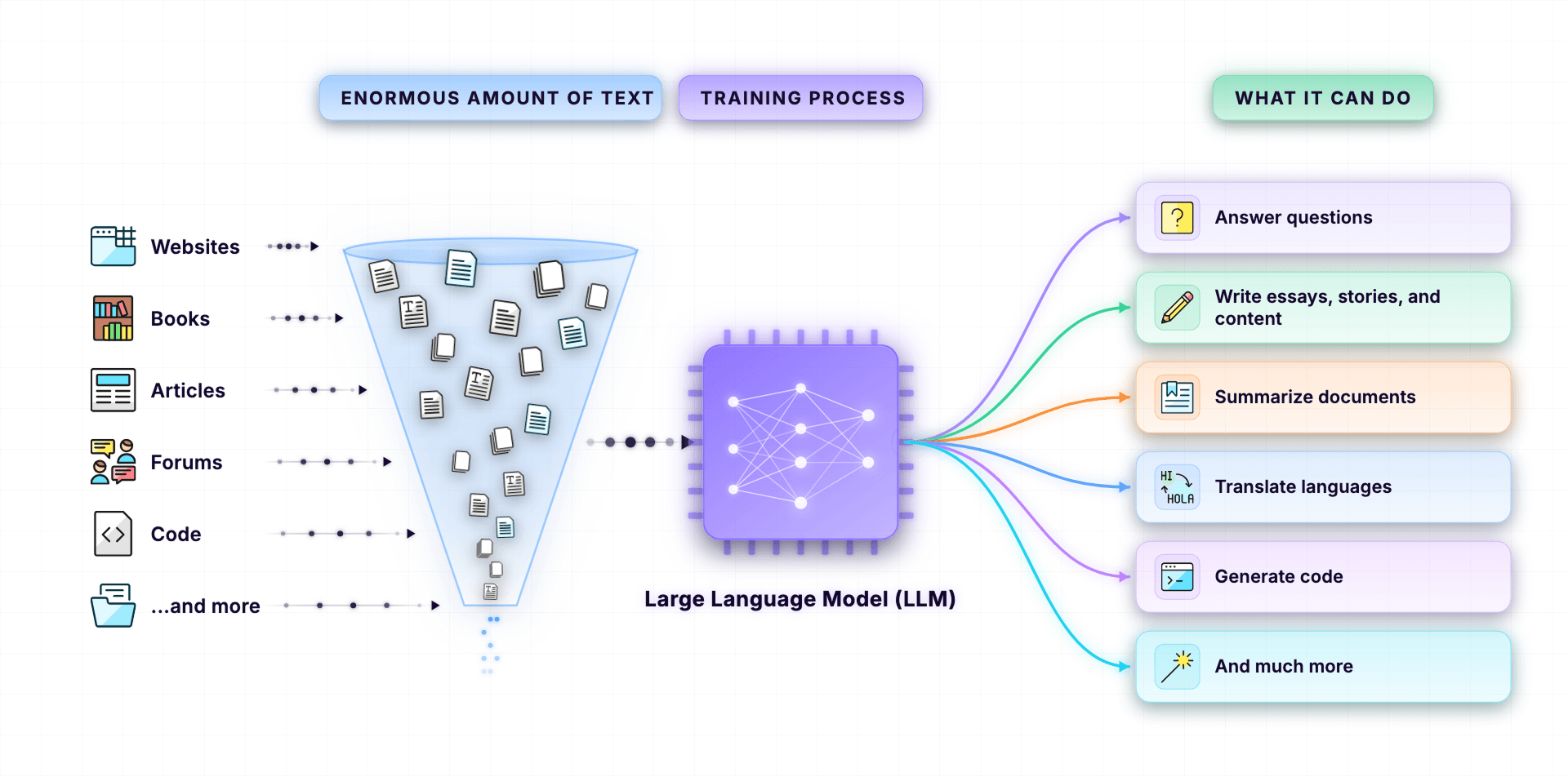

A Large Language Model (LLM) is a computer program that is trained on an enormous amount of text so that it can understand and generate human language.

So, what is a Large Language Model (LLM)?

A Large Language Model (LLM) is a computer program that is trained on an enormous amount of text.

And from all that training, it learned how to understand human language and reply in human language.

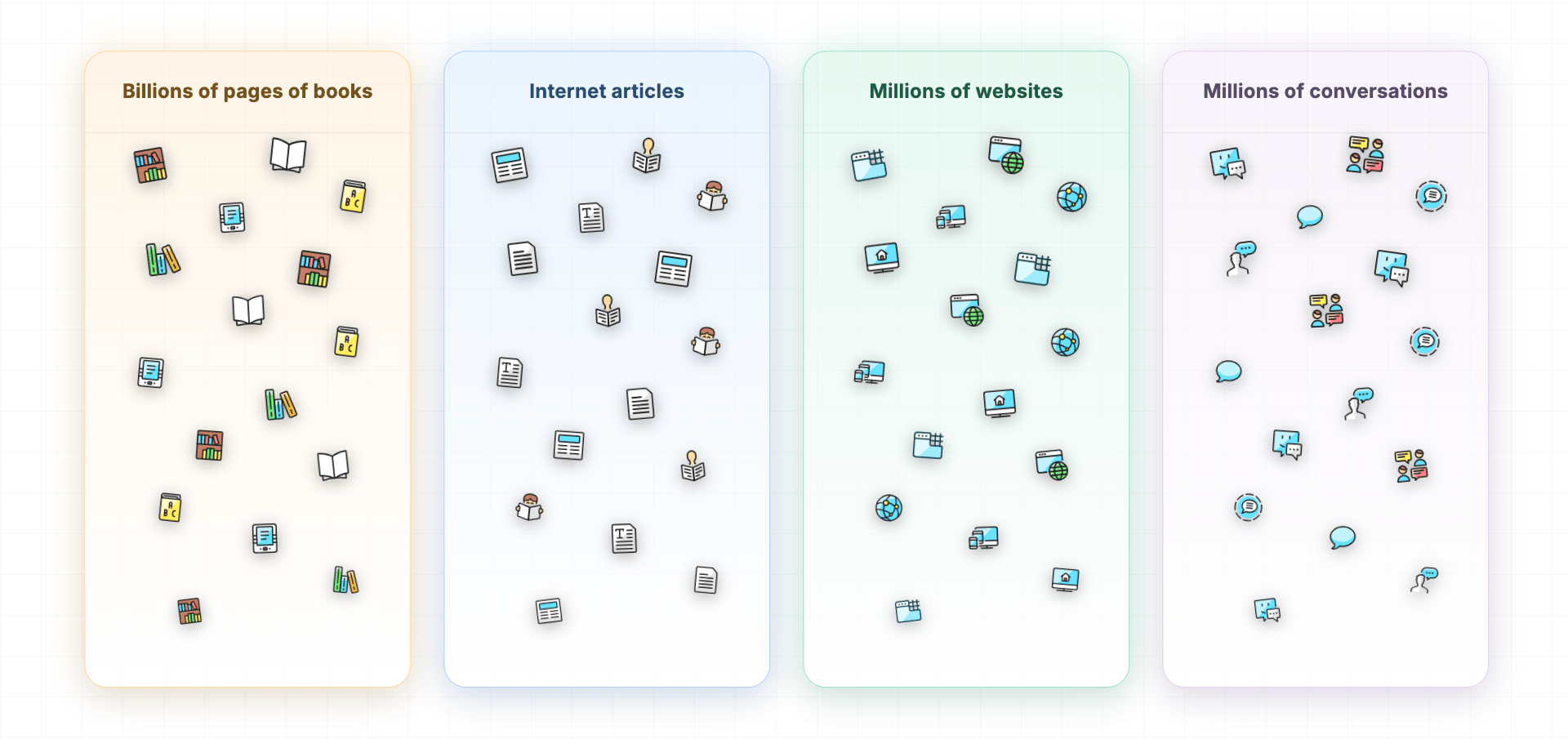

And when I say enormous amounts of text, I mean:

- Billions of pages of books

- Internet articles

- Millions of websites

- Millions of conversations

Because of this training, LLMs can recognize patterns in human language, which helps them:

- Understand human language

- Generate a response in human language

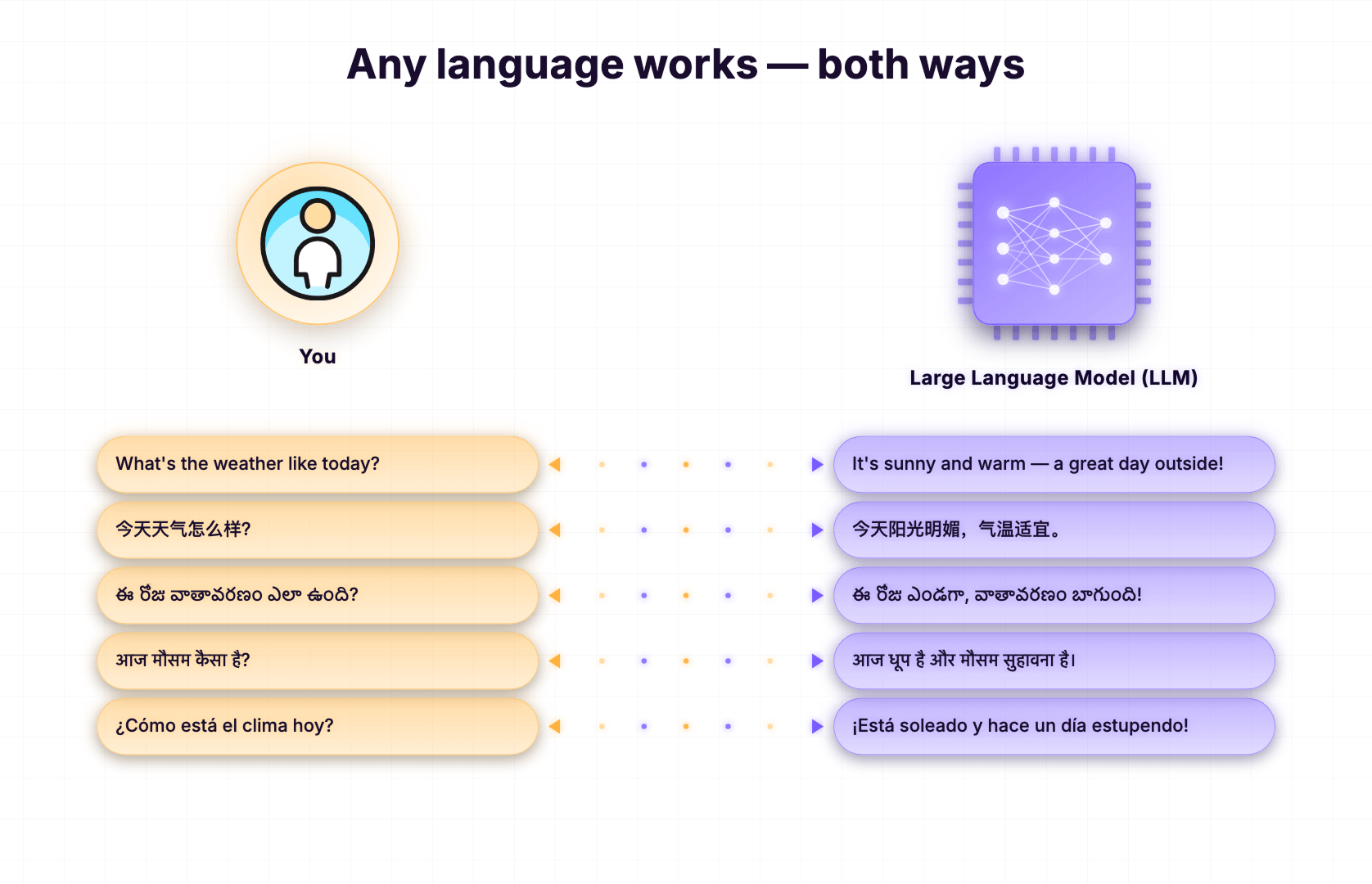

In other words, you can talk to an LLM in your own language, and it talks back in the same language.

And it does so in an intelligent and meaningful way.

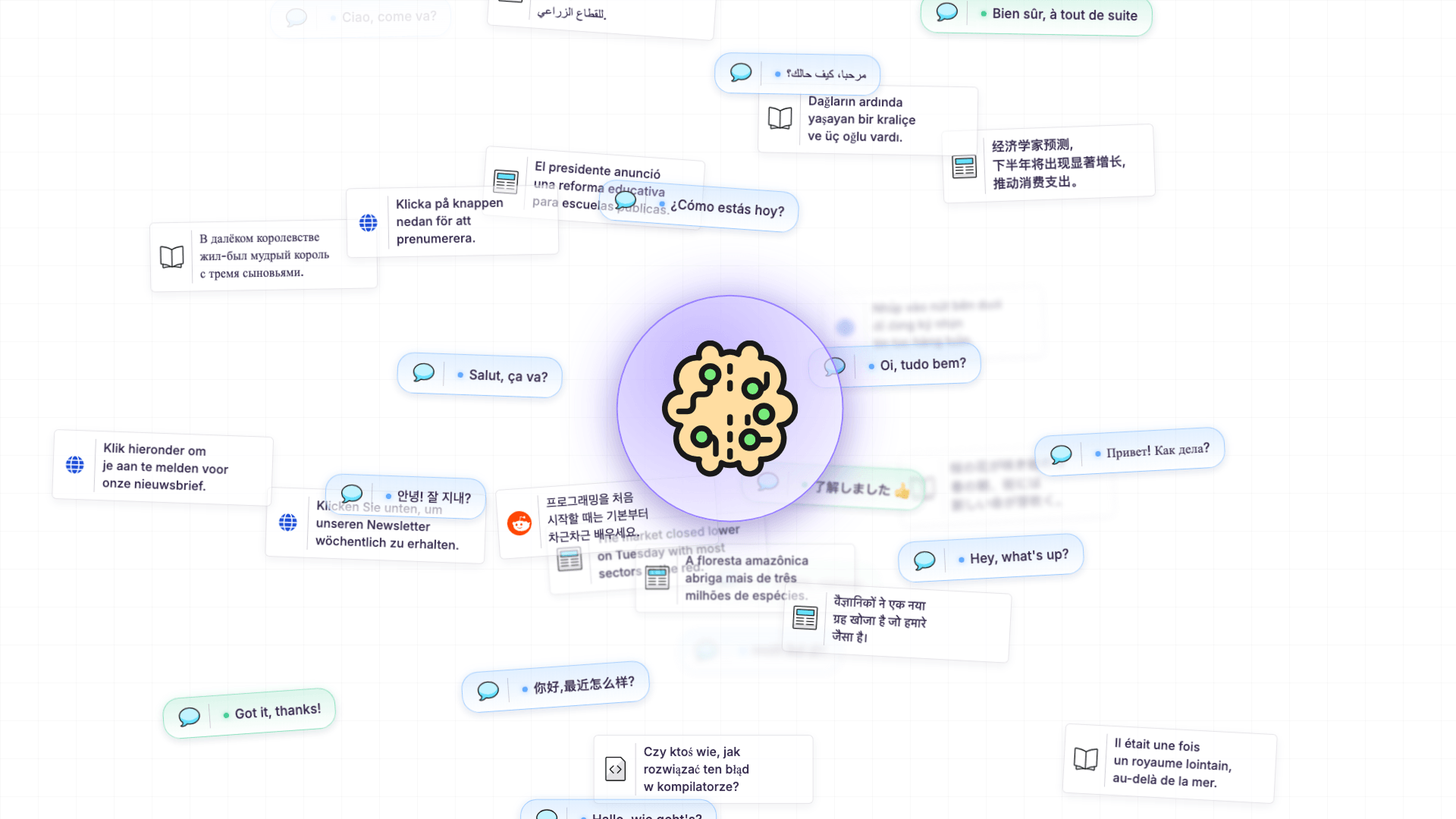

And by saying "your own language," I don't mean just English or Chinese.

The training text covers a huge range of languages: English, Hindi, Telugu, Tamil, Bengali, Spanish, Mandarin, Arabic, French, Japanese, German... the list goes on and on.

You name it.

This is why you can chat with ChatGPT in your own native language, and it replies in the same language.

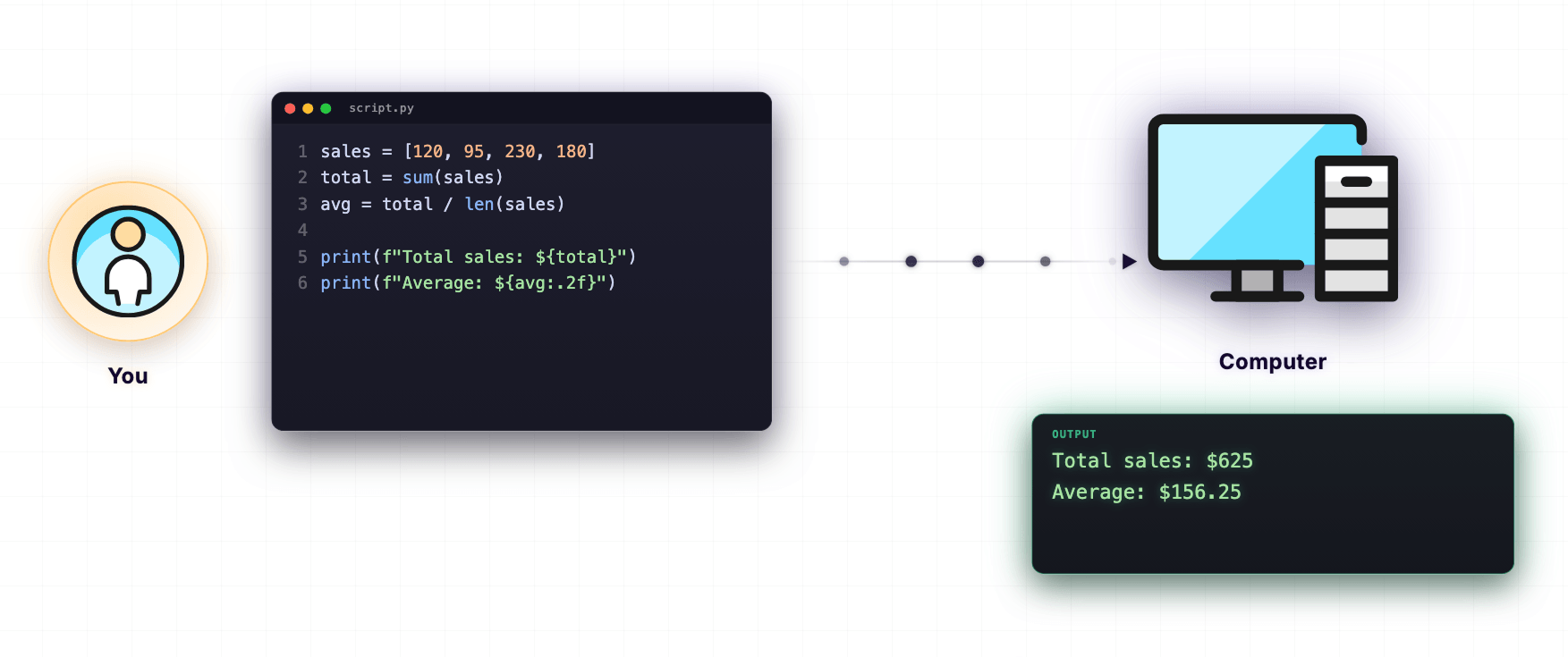

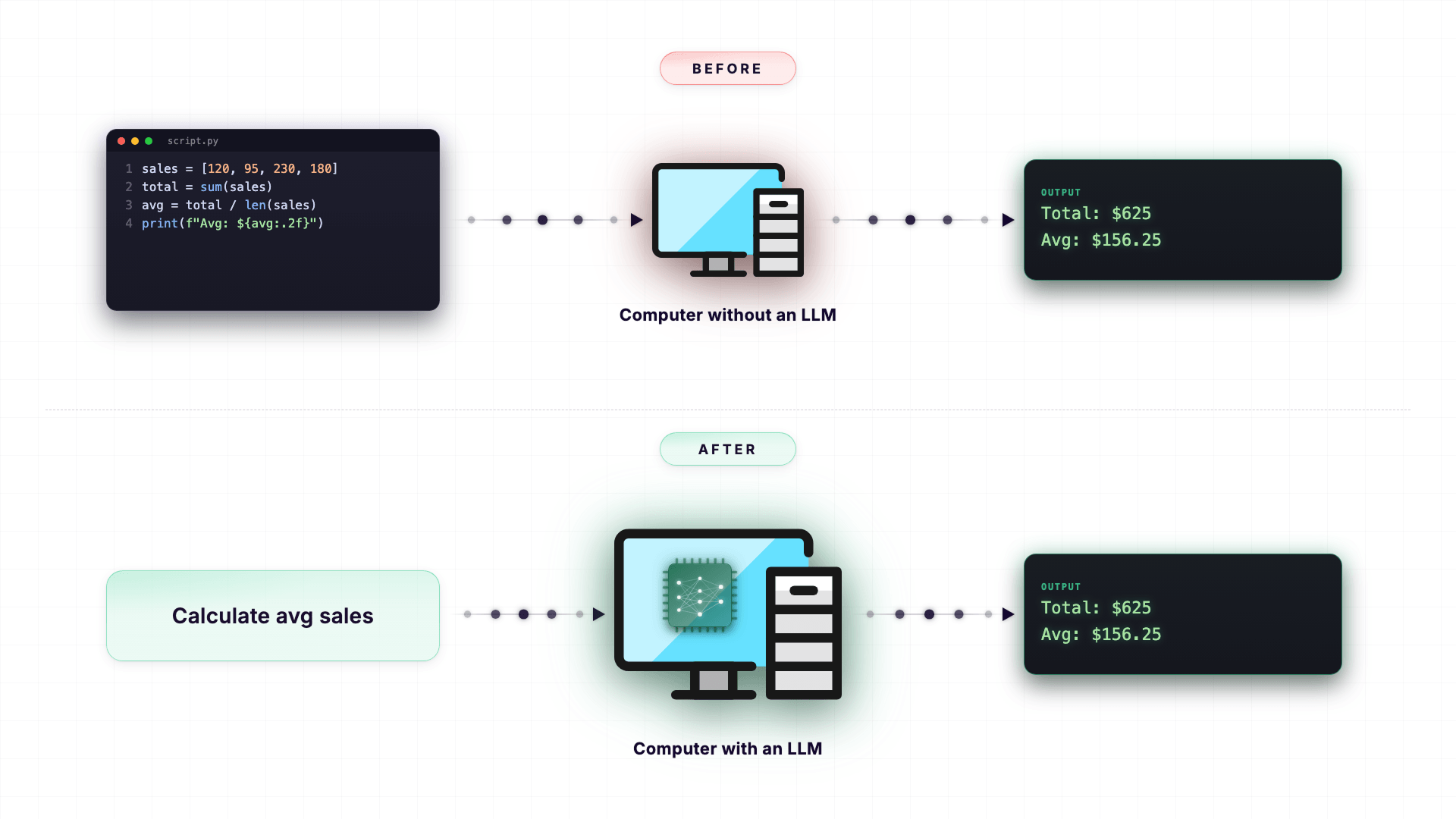

And honestly, this is a huge deal because, for decades, the only way to really make a computer do something useful was to write code in a programming language like Python, JavaScript, or Java.

If you wanted a computer to do anything beyond simple commands, you had to 'talk' to it in a programming language. Not in human language.

But the introduction of LLMs changed this completely.

Now, you can just type in plain English (or any other human language), and the LLM usually understands what you mean.

No code.

No special syntax.

Just normal, everyday conversation.

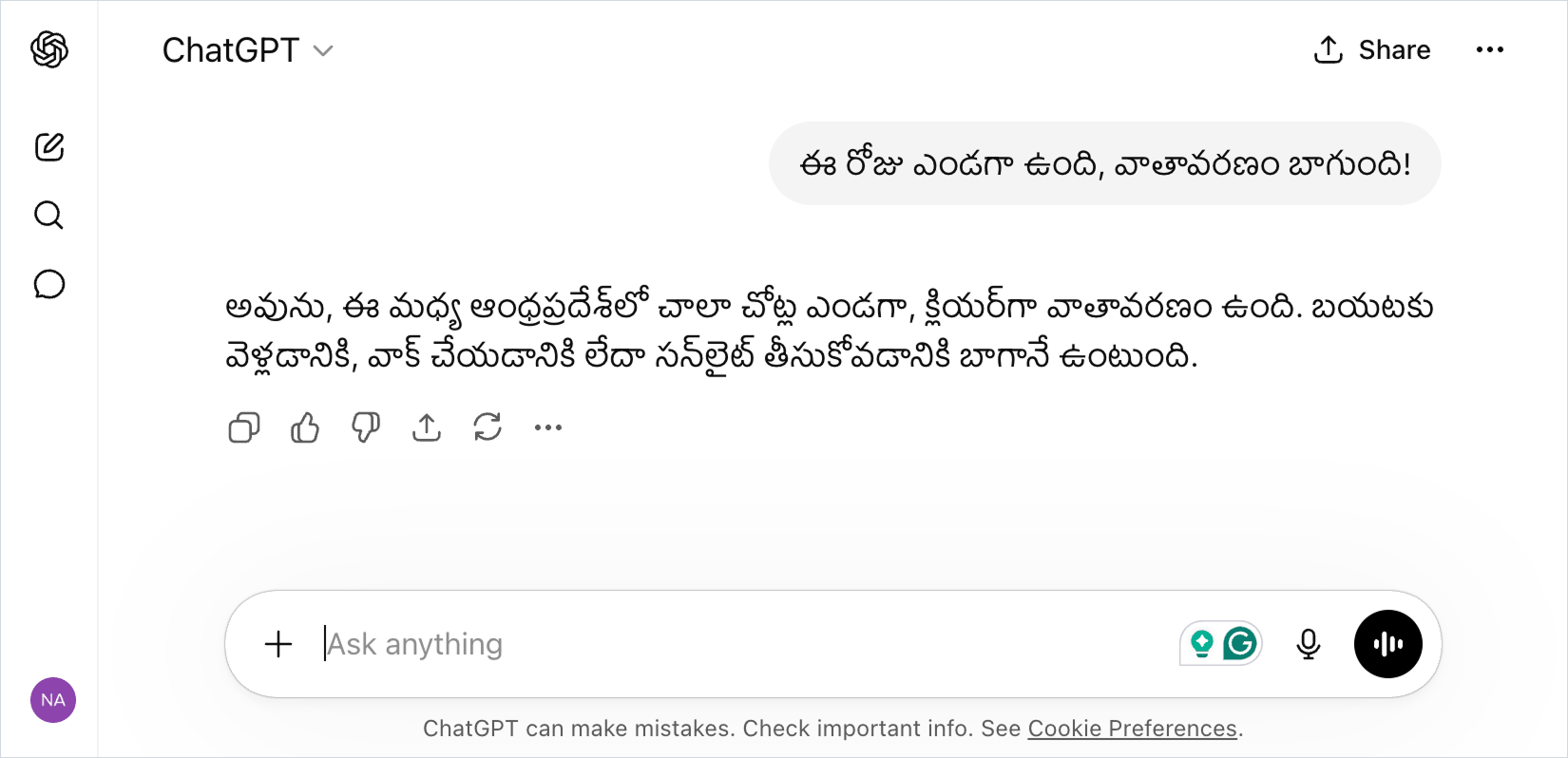

And the most famous example of an LLM in action is ChatGPT.

I bet you have used ChatGPT.

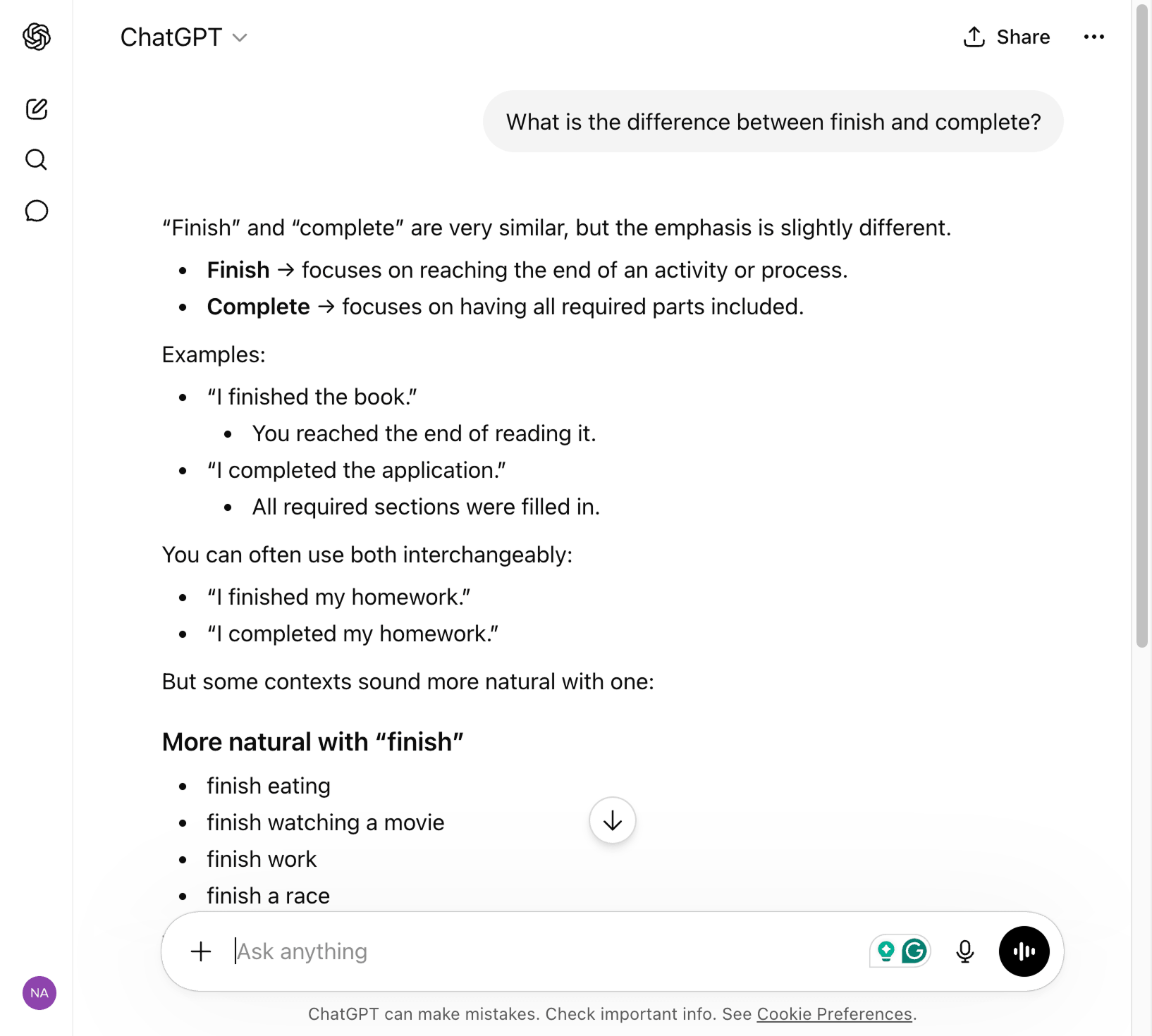

As you already know, using ChatGPT is simple:

- First, you open up a new chat

- Then, you ask something in plain English, for example, "What is the difference between finish and complete?"

- Finally, ChatGPT understands your request and replies in plain English, explaining the difference.

That is an LLM in action.

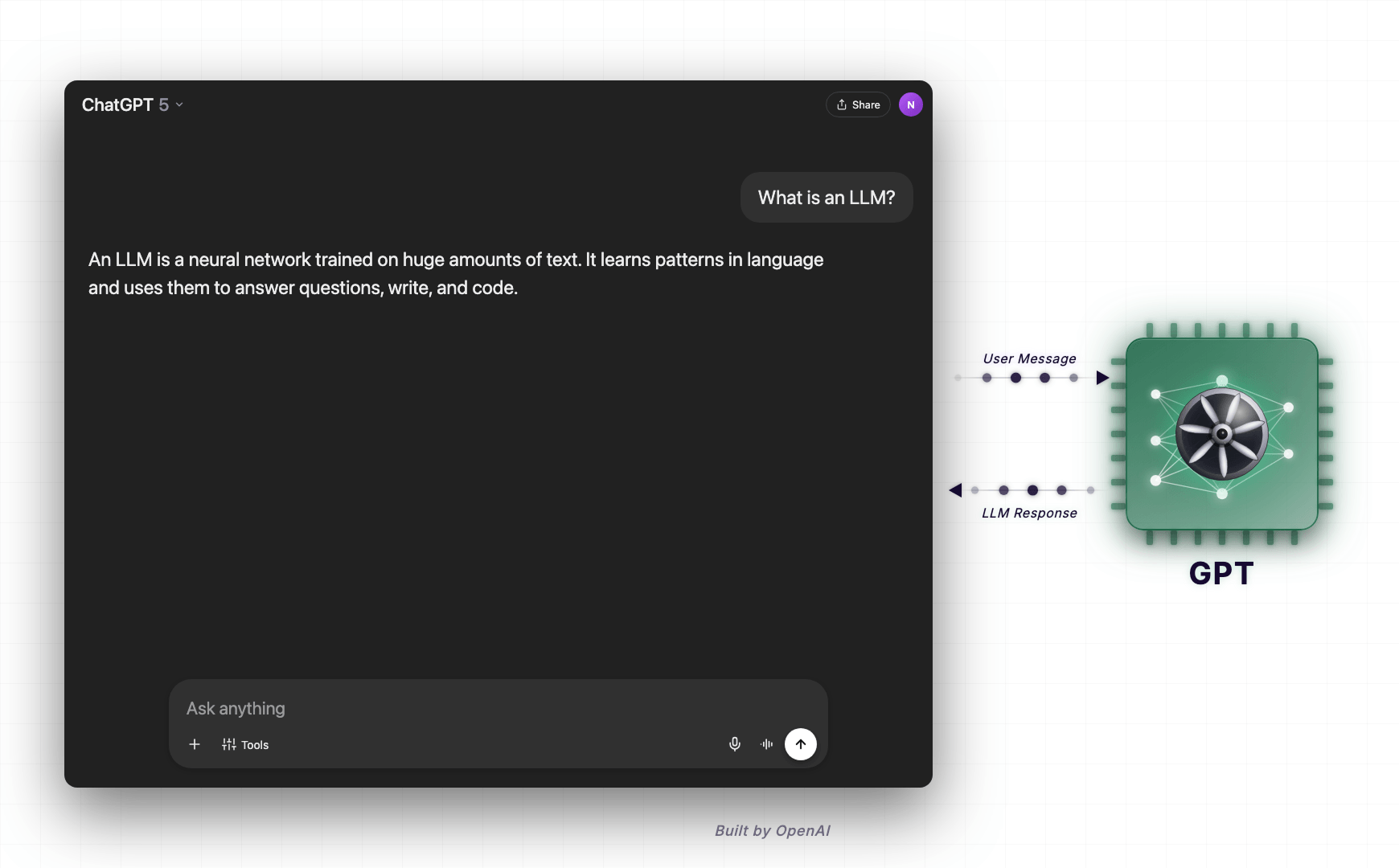

Also, just to be on the same page, ChatGPT is not an LLM.

It is a UI for interacting with an LLM called GPT (Generative Pre-trained Transformer).

OpenAI first developed GPT, and to make it accessible for everyone, they built ChatGPT as a chat UI on top of it.

These days, when I am chatting with ChatGPT, I genuinely feel like I am talking with a human.

When I speak with Claude Opus (another LLM), I feel like I am getting scolded by a short-tempered mentor.

"Hahaha! Things are definitely getting out of hand."

Yeah, I can see that too.

Anyway, let's dive a bit deeper now.

Let's understand the meaning behind the words "Large", "Language", and "Model" because together they explain what an LLM truly is.

Let's start with the word "Model".

What does the word "Model" mean?

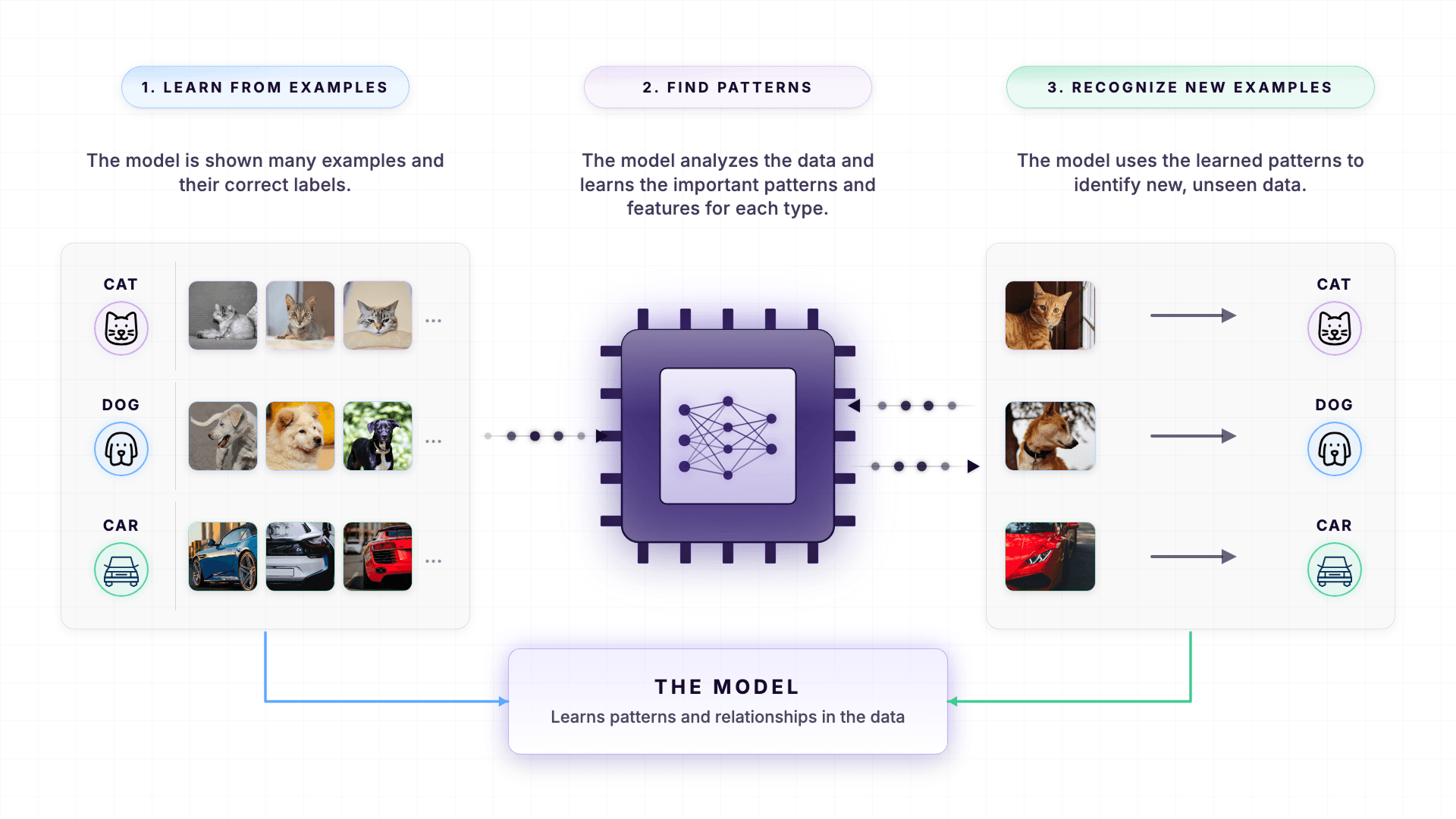

In the world of AI, a "model" is a computer program that has learned patterns from data.

The data can be text, images, voice, etc.

Patterns are what allow an LLM to generate human-like text that is genuinely helpful to us.

Let me elaborate.

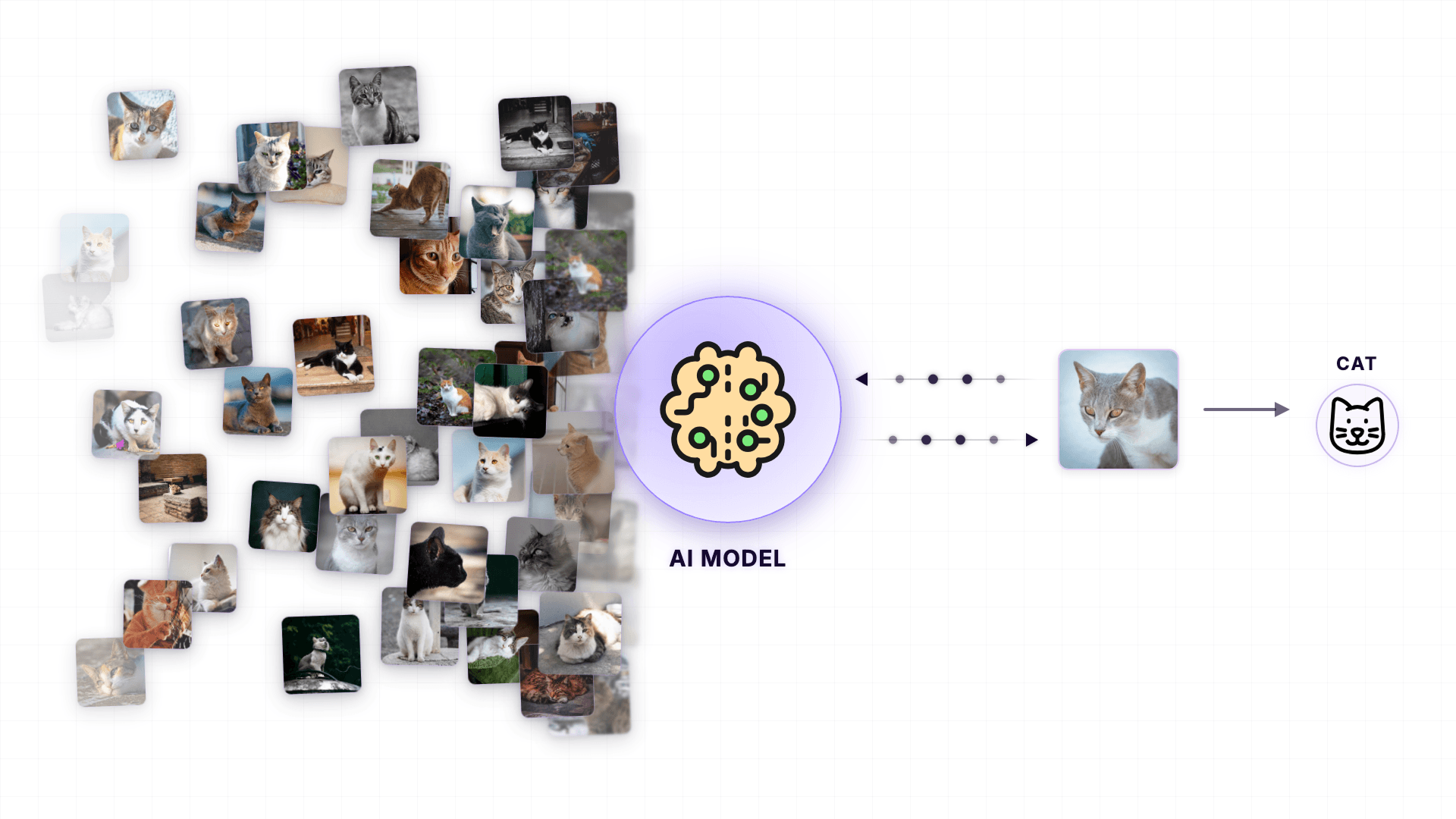

You know that an AI can recognize pictures of animals and humans, right?

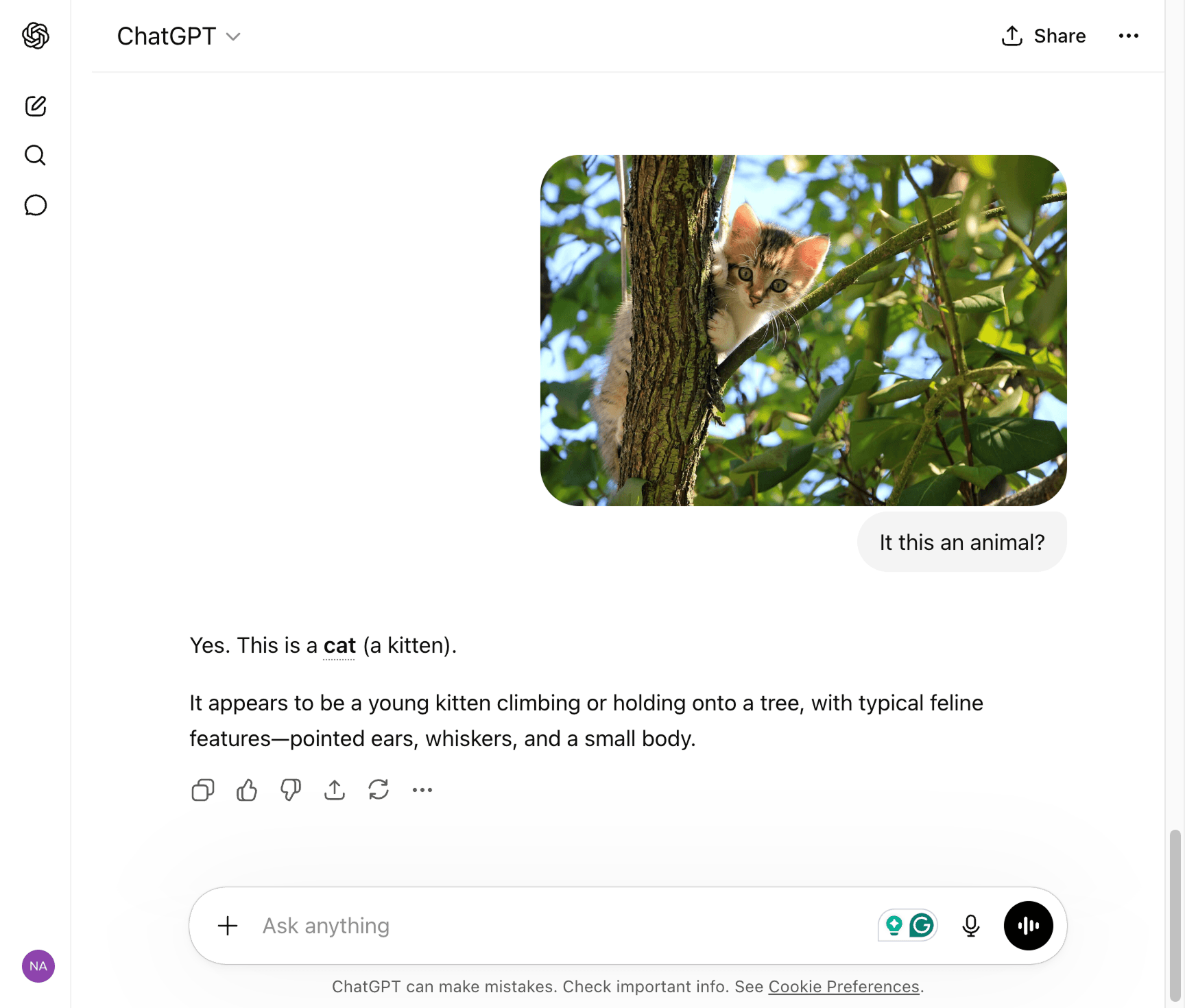

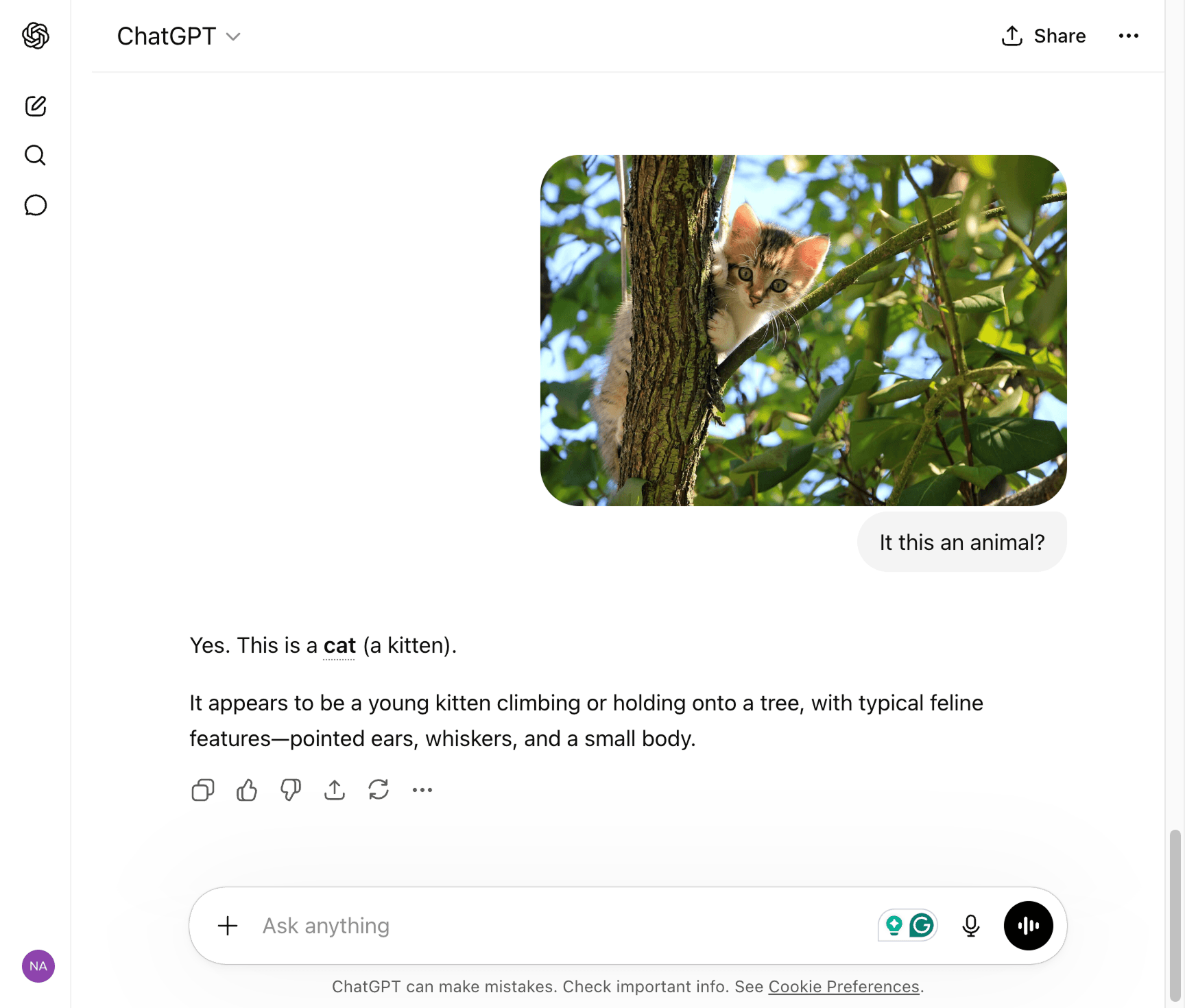

For example, I uploaded a picture of a cat (a kitten), and the AI model behind ChatGPT recognized it.

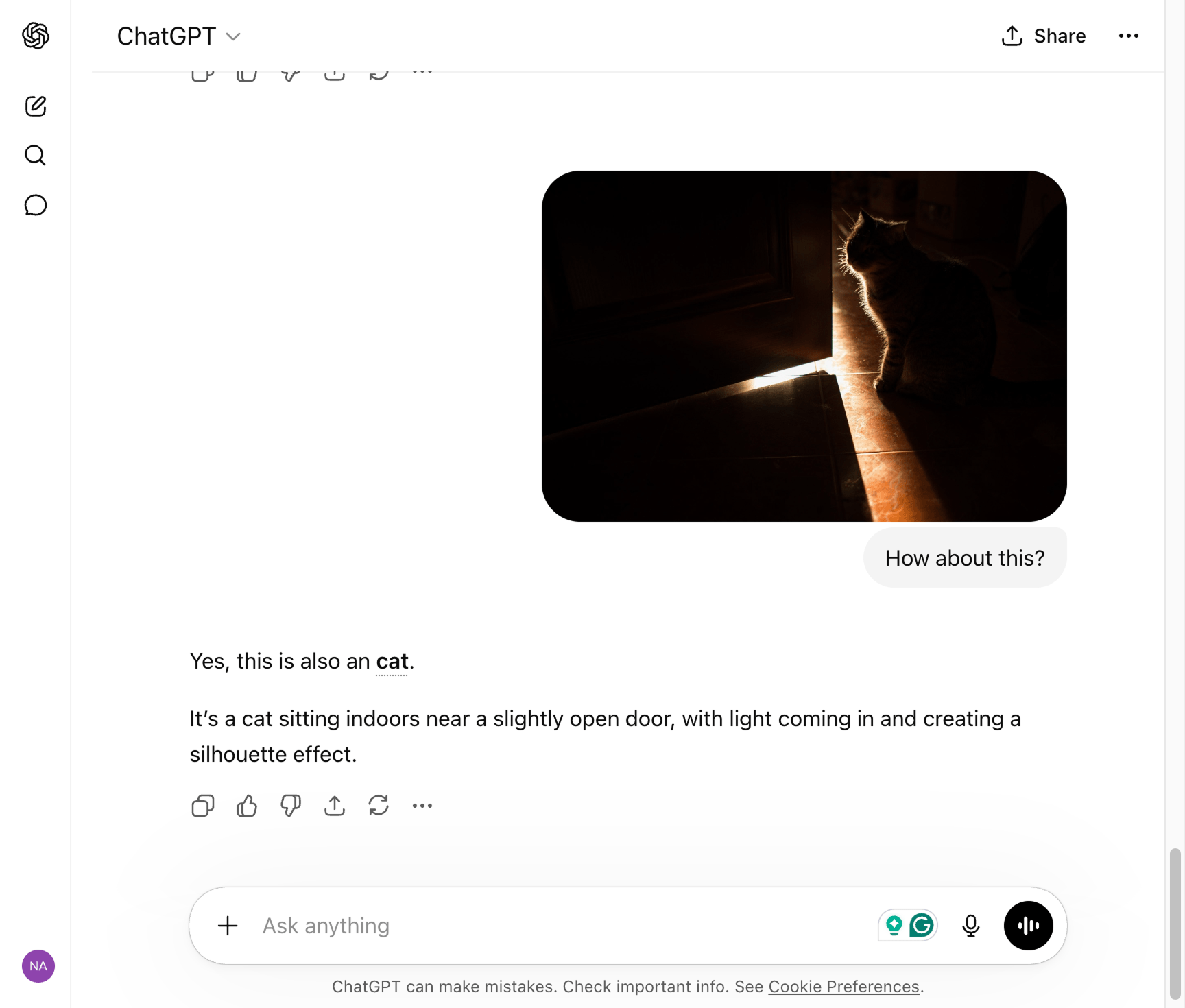

The surprising part is that the model was also able to recognize a cat in the dark, even though the cat's body and facial features are not clearly visible.

This is possible because the AI model was fed hundreds of thousands of cat photos, which helped it learn how a cat looks in all kinds of lighting and from all kinds of angles.

The same happens with text.

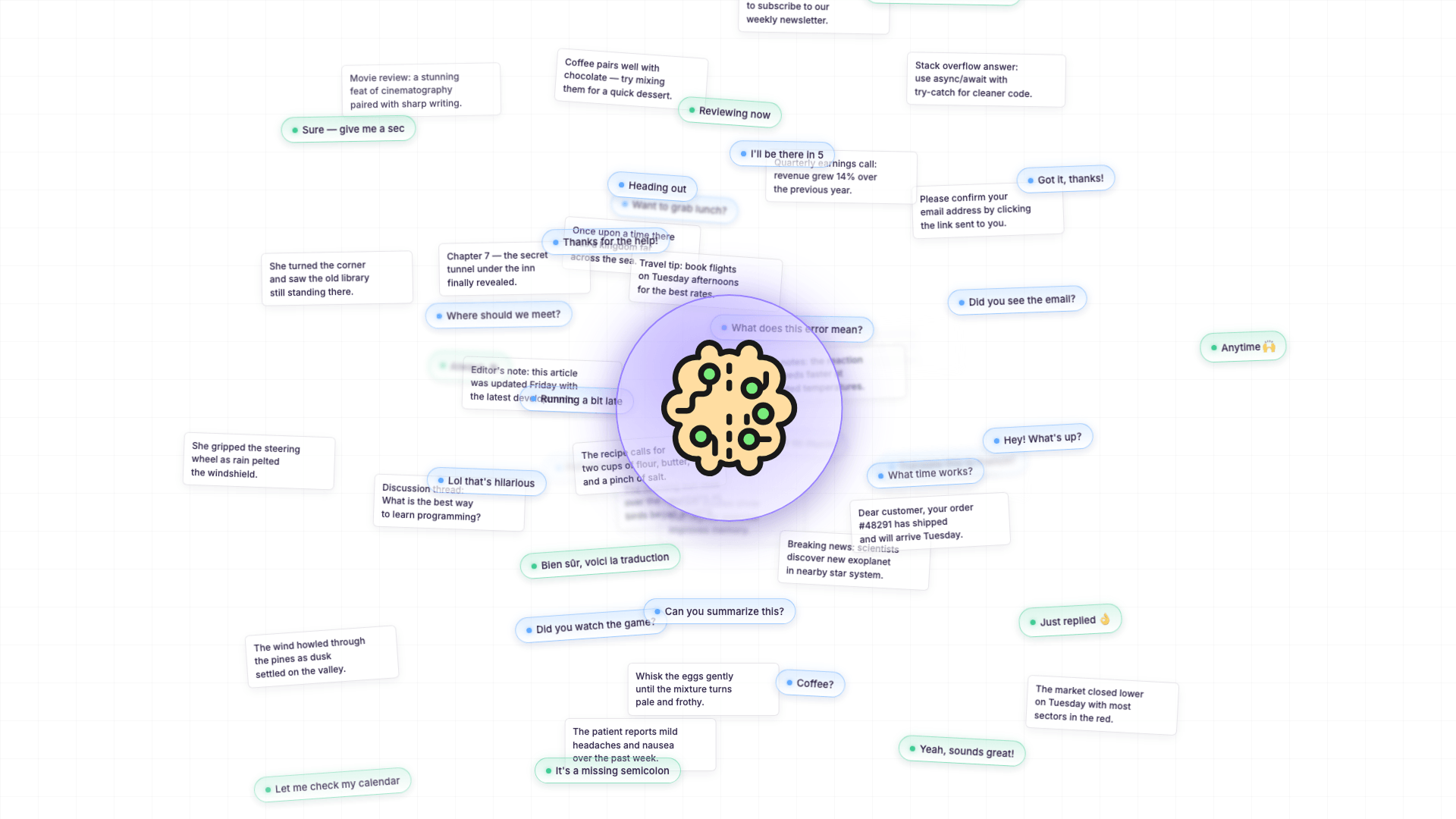

When an LLM is being trained, it is shown sentence after sentence, paragraph after paragraph, conversation after conversation - billions of them.

"Billions of text?"

Yep, there is no shortage of text available from books, forums like Reddit, and internet articles, is there?

But here is the clever part.

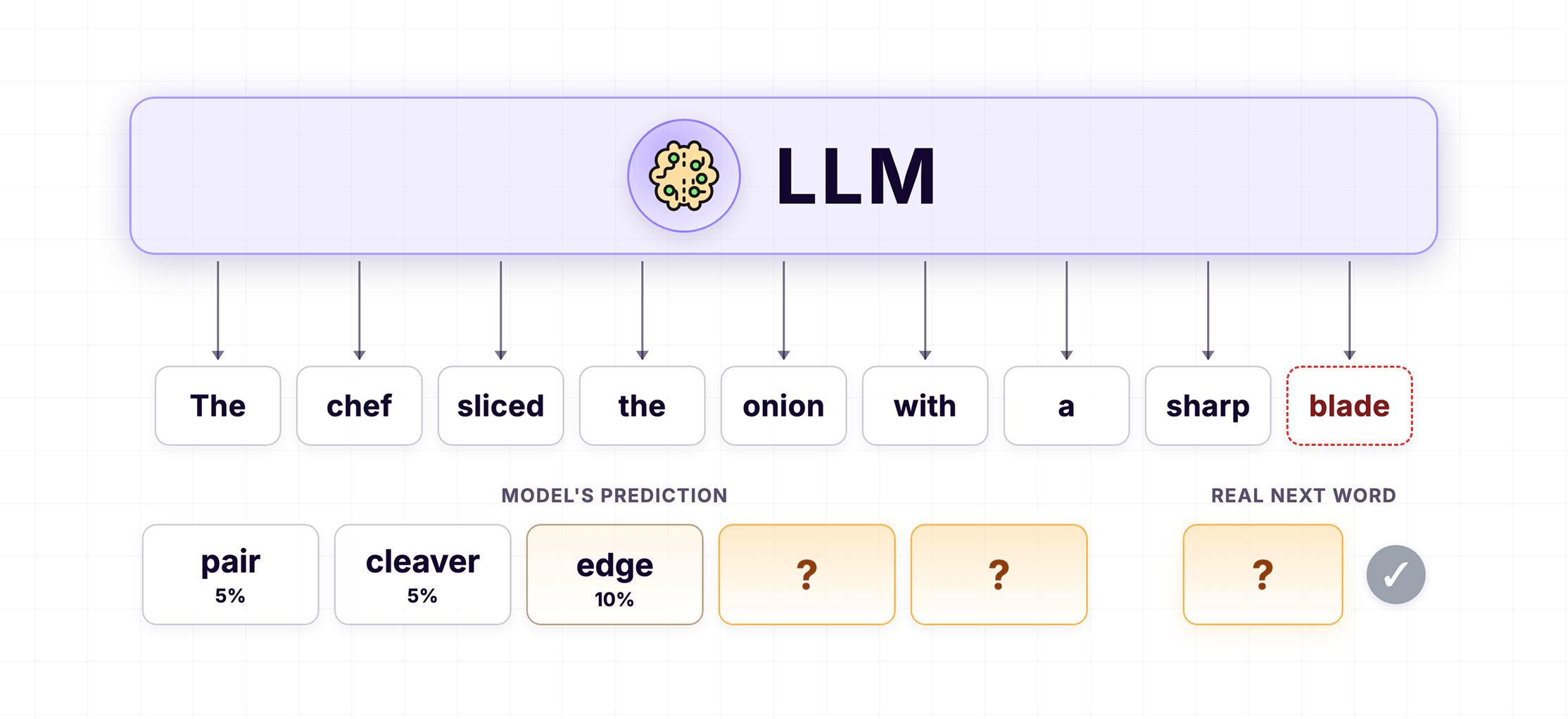

The LLM is not just "reading" these sentences. It is being trained to predict the next word in a sentence.

Let me show you what I mean.

Imagine the LLM is shown this sentence during training:

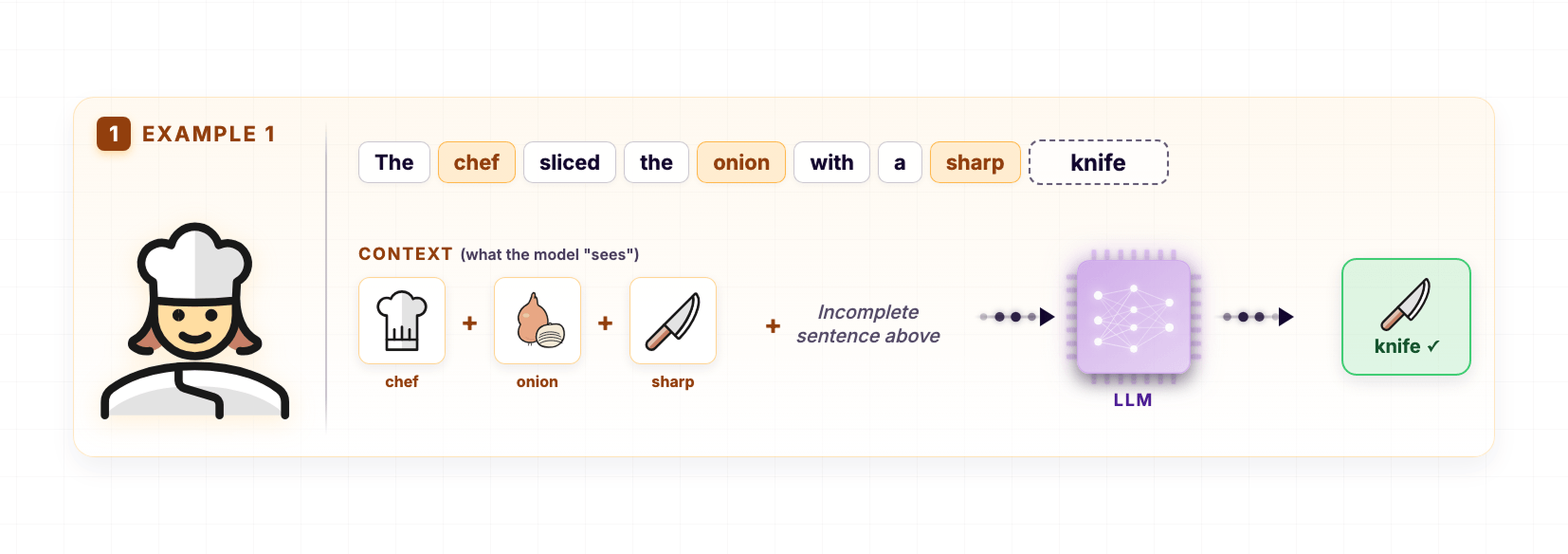

The chef sliced the onion with a sharp ___

The training is set up so that the last word is hidden and the LLM is asked to guess it.

The fact is, the LLM doesn't know the answer at first.

So, it might guess "axe" or "blade" or "tool".

This is because it is still in the training process, and it is trying to recognize patterns.

Having said that, during training, every time the model guesses wrong, it gets corrected.

And every time it guesses right, that pattern gets reinforced.

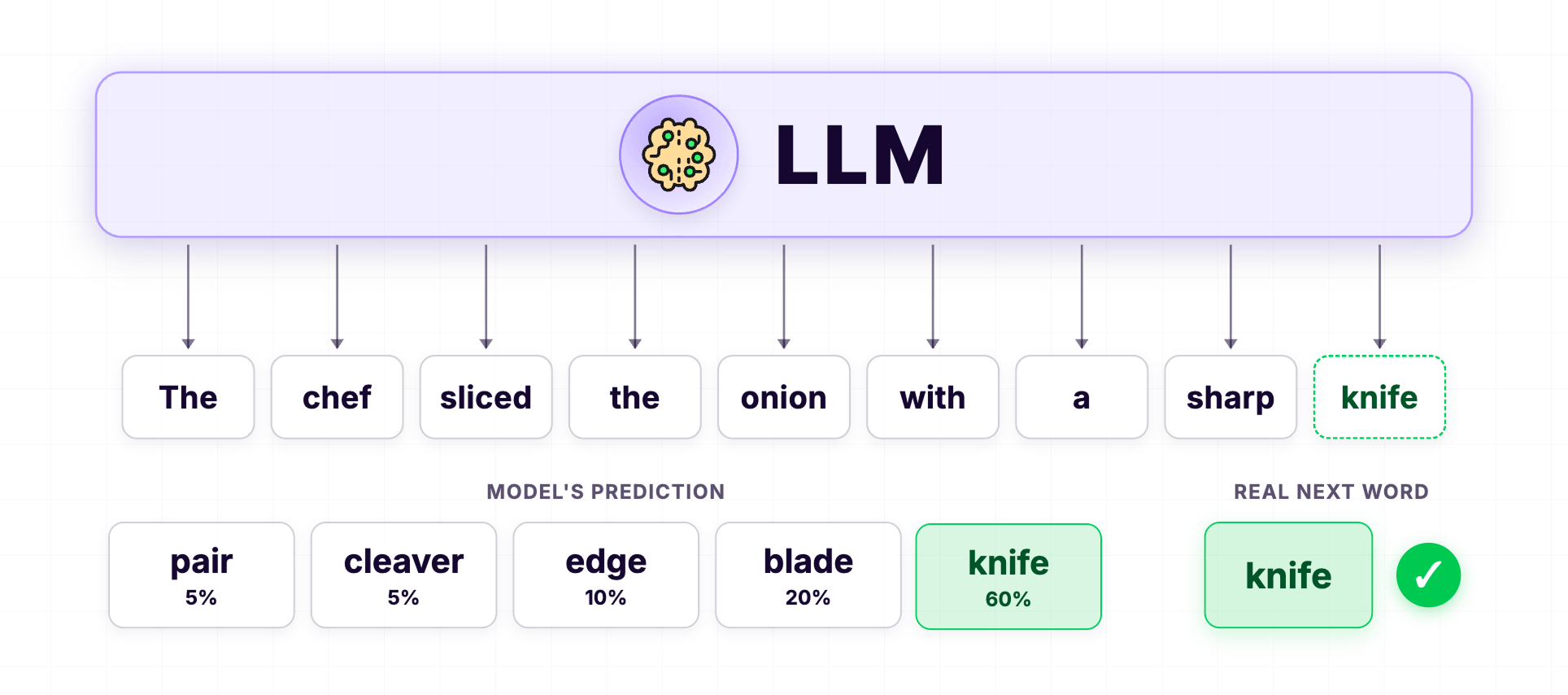

Simply put, when the LLM guesses that the answer is "knife", the pattern behind that guess gets strengthened:

The chef sliced the onion with a sharp knife.

But a single example is not enough.

A single example is just a guess. The LLM might have gotten lucky.

Imagine yourself as a kid, playing a video game for the first time.

You didn't cross the first level the moment you started playing, right?

You probably took many attempts.

And by the 10th attempt, you had figured out how to beat the level.

You didn't memorize the level.

You picked up the patterns about when to jump, when to duck, and where the traps are.

That is exactly how an LLM learns language.

The LLM goes through the same "Guess the word" exercise billions of times, across billions of different sentences.

Each time the model guesses right, the pattern gets a little stronger.

Each time it guesses wrong, it gets corrected and adjusts.

After enough rounds, it gets remarkably good at predicting the next word in almost any sentence.

And nobody programmed these patterns into the LLM.

It figured them out on its own, just by seeing them repeat across billions of sentences.
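To make the "guess the word" exercise concrete, here is a minimal Python sketch. It is only an illustration, not how a real LLM is built: it simply counts which word follows which, whereas a real LLM adjusts billions of internal numbers. The training sentences and the function names `train` and `predict_next` are made up for this example.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text; a real LLM sees billions of sentences.
TRAINING_SENTENCES = [
    "the chef sliced the onion with a sharp knife",
    "the cook chopped the carrot with a sharp knife",
    "the chef cut the bread with a sharp knife",
    "the scout carved the stick with a sharp blade",
]

def train(sentences):
    """Count which word follows each word across all sentences."""
    next_word_counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            next_word_counts[current][following] += 1
    return next_word_counts

def predict_next(model, word):
    """Return the most frequently seen follower of `word`, or None if unseen."""
    followers = model.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

model = train(TRAINING_SENTENCES)
print(predict_next(model, "sharp"))  # knife (seen 3 times, "blade" only once)
```

Notice that no rule says "sharp is followed by knife". The answer falls out of the counts, and it would change if the training sentences changed.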

"So is the LLM just memorizing sentences?"

Nope.

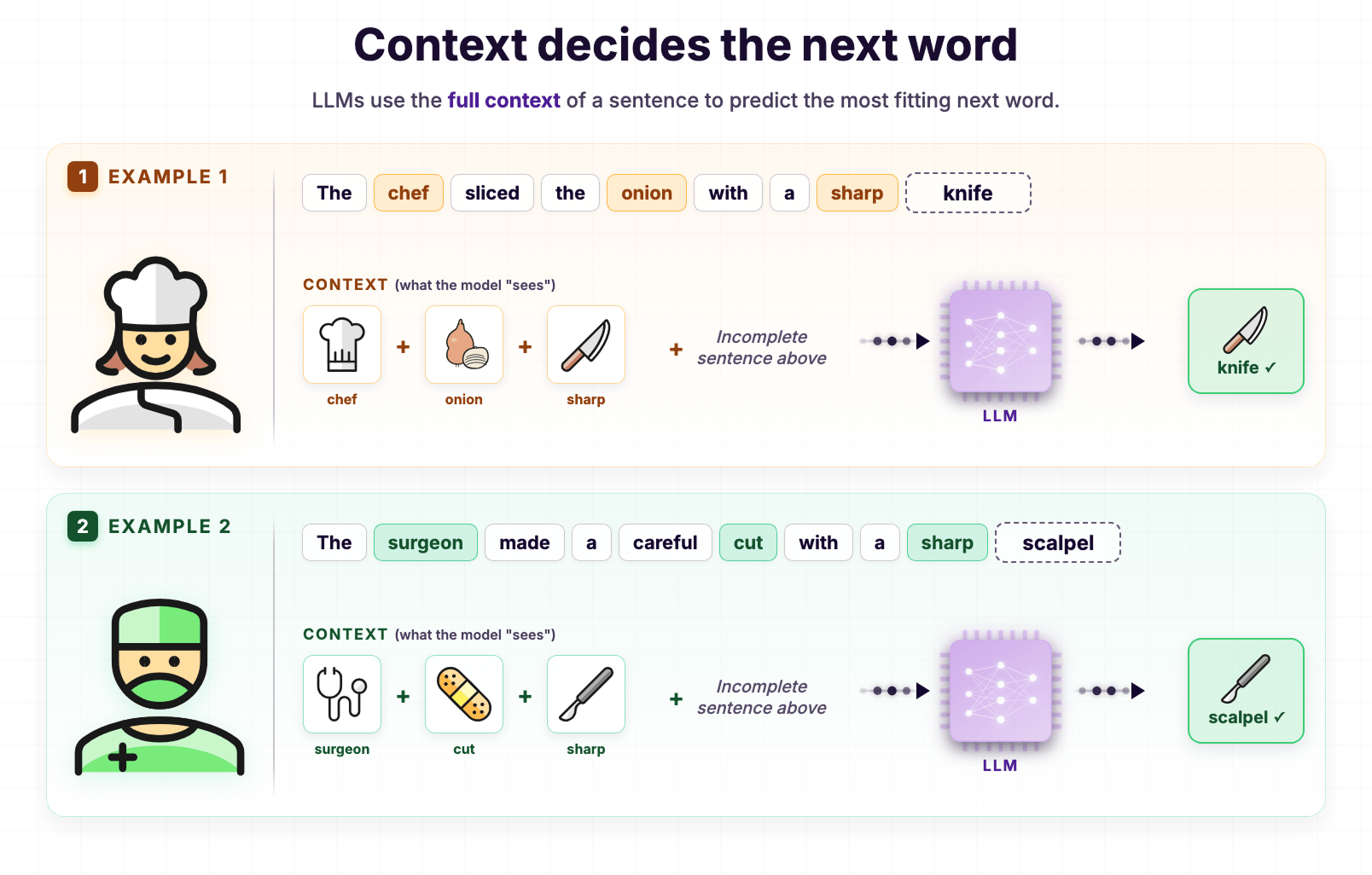

Using the patterns it learned, the LLM uses the full context of a sentence to predict the most fitting next word.

For example, here is the sentence we have been working with:

The chef sliced the onion with a sharp ___

The LLM predicts 'knife' because it has seen the context chef + onion + sharp across many similar sentences during training, and 'knife' was almost always the next word.

Now look at these sentences:

The chef sliced the onion with a sharp ___

The surgeon made a careful cut with a sharp ___

Notice that both sentences end with the word "sharp."

Does this mean the LLM predicts "knife" for the surgeon sentence, too?

Nope.

The LLM predicts "scalpel."

The surgeon made a careful cut with a sharp scalpel.

Why?

Because the full context is different: surgeon + cut + sharp.

And during training, the LLM saw this kind of context paired with "scalpel" almost every time.

So, just like predicting "knife" became easier, predicting "scalpel" is now very easy for the LLM, too.

The LLM is not picking the same answer every time just because the last few words match.

It is reading the entire sentence and picking the word that fits that specific context.
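Here is a rough Python sketch of the "whole context matters" idea. It is a toy, not a real LLM: it scores each candidate word by how many words the prompt shares with sentences it has seen before. The example sentences and the function name `predict_from_context` are assumptions made up for illustration.

```python
from collections import Counter

# Hypothetical training examples: (context, the word that came next).
EXAMPLES = [
    ("the chef sliced the onion with a sharp", "knife"),
    ("the cook chopped the garlic with a sharp", "knife"),
    ("the surgeon made an incision with a sharp", "scalpel"),
    ("the surgeon began the operation with a sharp", "scalpel"),
]

def predict_from_context(context):
    """Pick the next word whose past contexts overlap most with this prompt."""
    prompt_words = set(context.lower().split())
    scores = Counter()
    for seen_context, next_word in EXAMPLES:
        shared_words = prompt_words & set(seen_context.split())
        scores[next_word] += len(shared_words)
    return scores.most_common(1)[0][0]

print(predict_from_context("The chef sliced the onion with a sharp"))
print(predict_from_context("The surgeon made a careful cut with a sharp"))
```

Both prompts end in "sharp", but the first one prints knife and the second prints scalpel, because words like "chef" and "surgeon" pull the score in different directions.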

And this is exactly how the LLM behind ChatGPT writes an entire article for you.

The LLM builds an entire article by predicting one word at a time, based on the patterns it learned during training.
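The "one word at a time" loop can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions: the toy model only looks at the last two words and always picks the most common follower, while a real LLM looks at the whole conversation. The sentences and the names `train` and `generate` are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy training text.
SENTENCES = [
    "the chef sliced the onion with a sharp knife .",
    "the cook sliced the onion with a small knife .",
    "the chef sliced the bread with a sharp knife .",
]

def train(sentences):
    """Count which word follows each pair of consecutive words."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for first, second, following in zip(words, words[1:], words[2:]):
            counts[(first, second)][following] += 1
    return counts

def generate(model, start, max_words=12):
    """Extend `start` one word at a time until no known follower remains."""
    words = start.split()
    while len(words) < max_words:
        followers = model.get(tuple(words[-2:]))
        if not followers:
            break
        # Append the single most likely next word, then repeat.
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

model = train(SENTENCES)
print(generate(model, "the cook"))
# the cook sliced the onion with a sharp knife .
```

The printed sentence does not appear anywhere in the training text (the cook's knife was "small" there). The model stitched it together from patterns, one word at a time.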

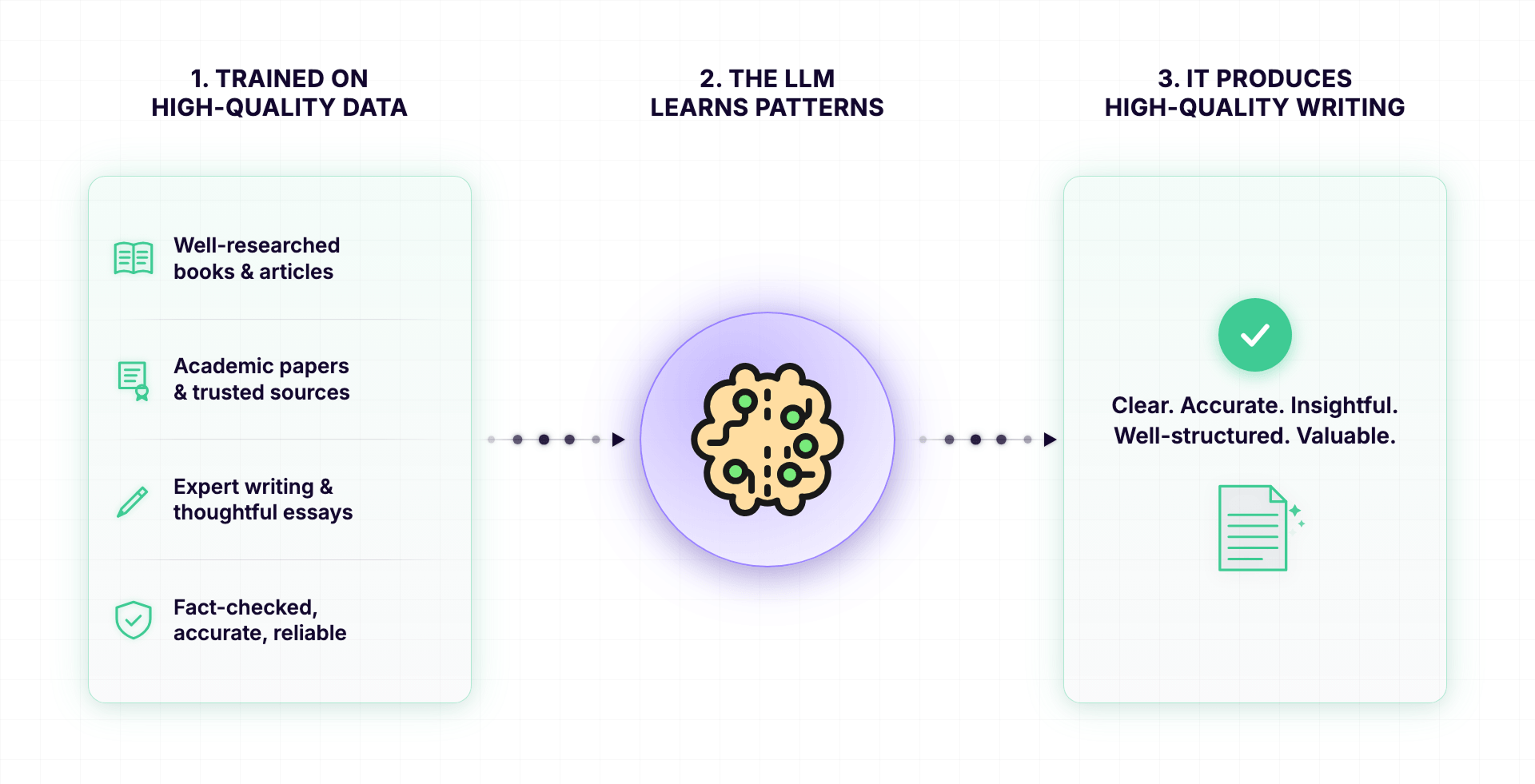

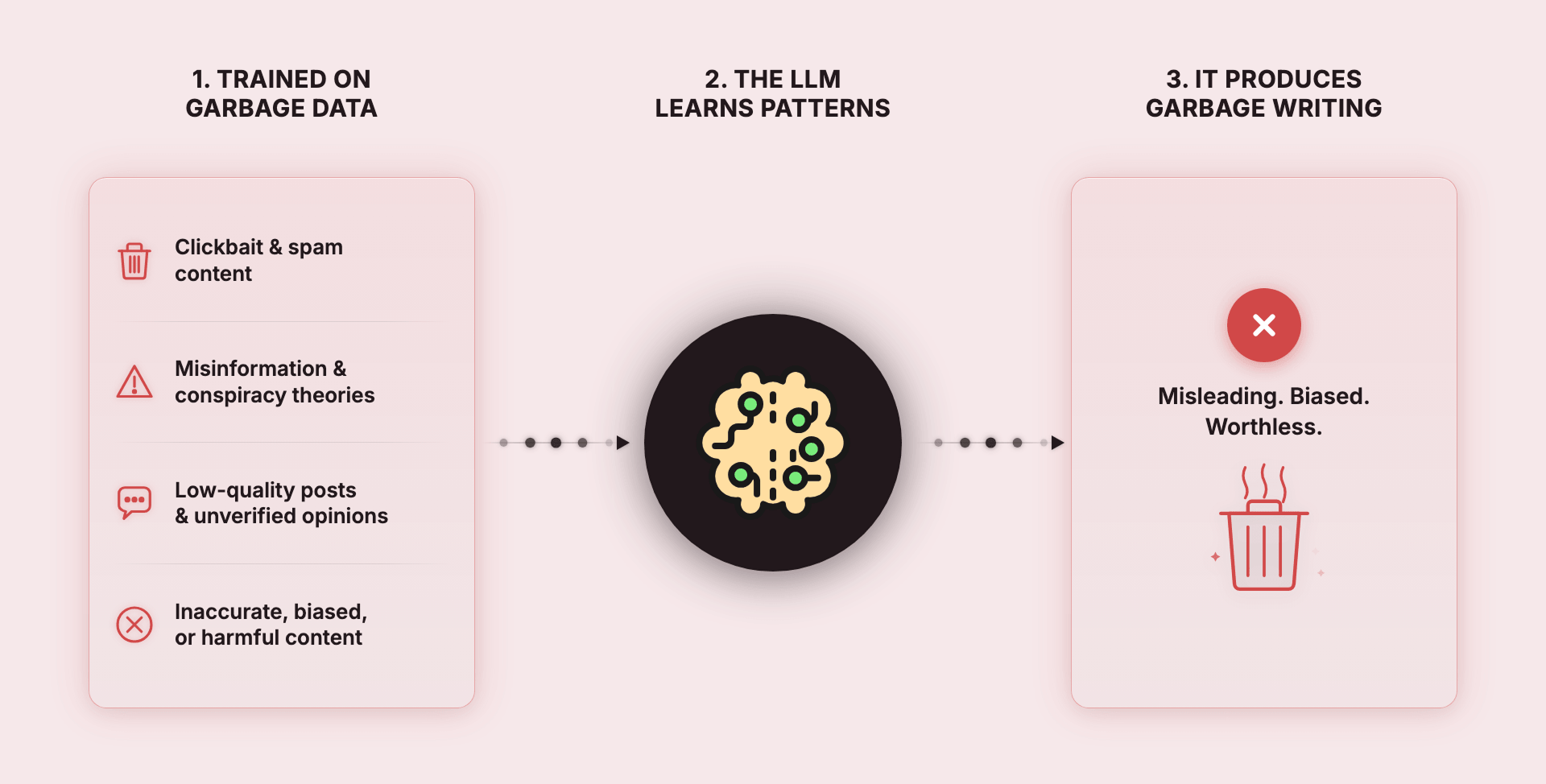

This means that everything the LLM writes is a reflection of what it was trained on.

If it was trained on high-quality writing, it produces high-quality writing.

If it was trained on garbage, it produces garbage.

Also, a model doesn't have to be about text.

AI models can be trained on all kinds of data, such as images, videos, audio, and even DNA sequences.

The model is fed enormous amounts of one specific kind of data, and it learns the patterns of that data.

Only then can it generate brand new things in the same format.

In our case, an LLM is a model trained specifically on language. That is why it can read and write so well.

Anyway, this is what the word "Model" means in "Large Language Model."

Next, let's talk about what the word "Language" means in "Large Language Model."

A "Language" model simply means that the LLM has been specifically trained on human language.

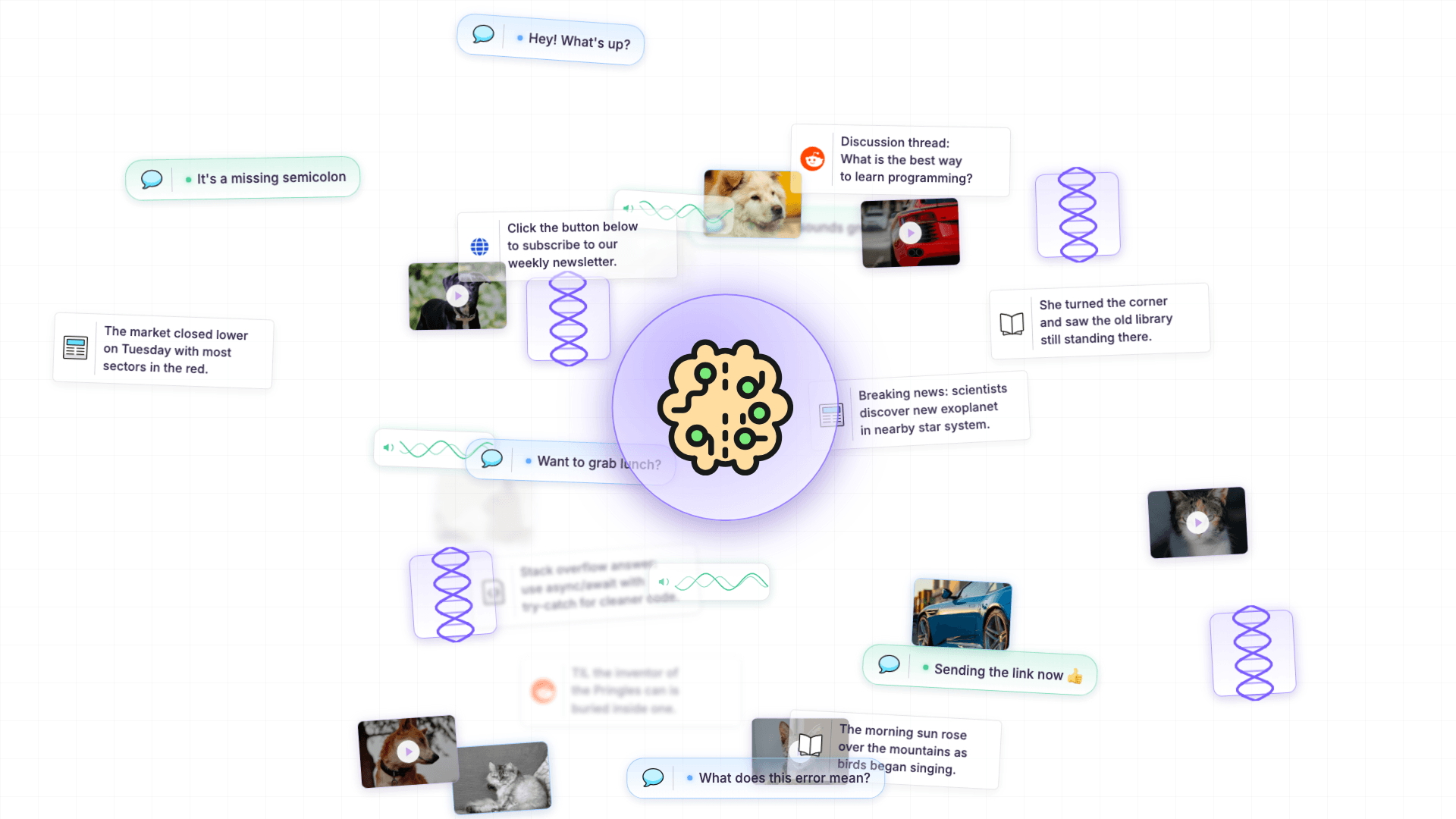

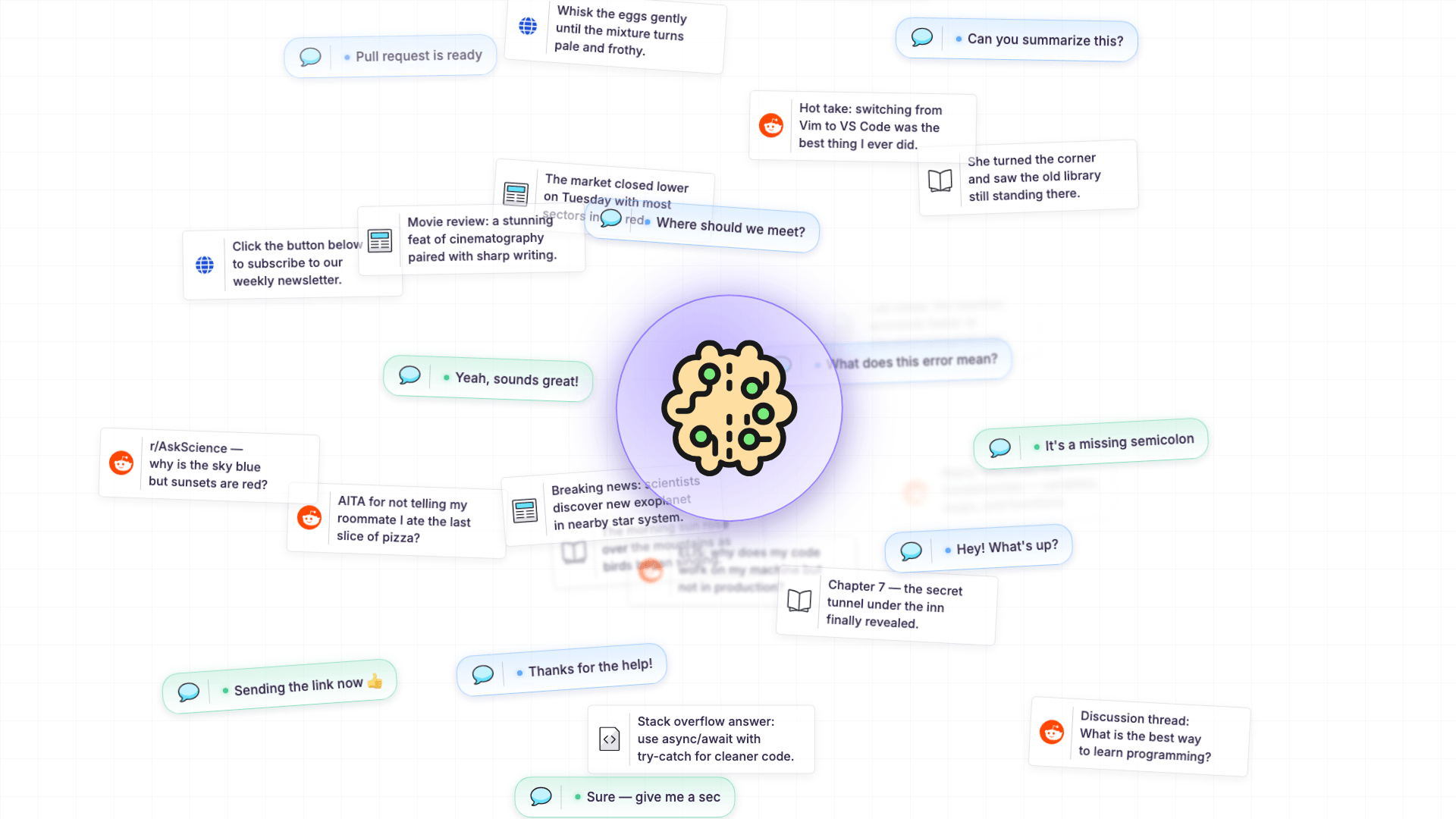

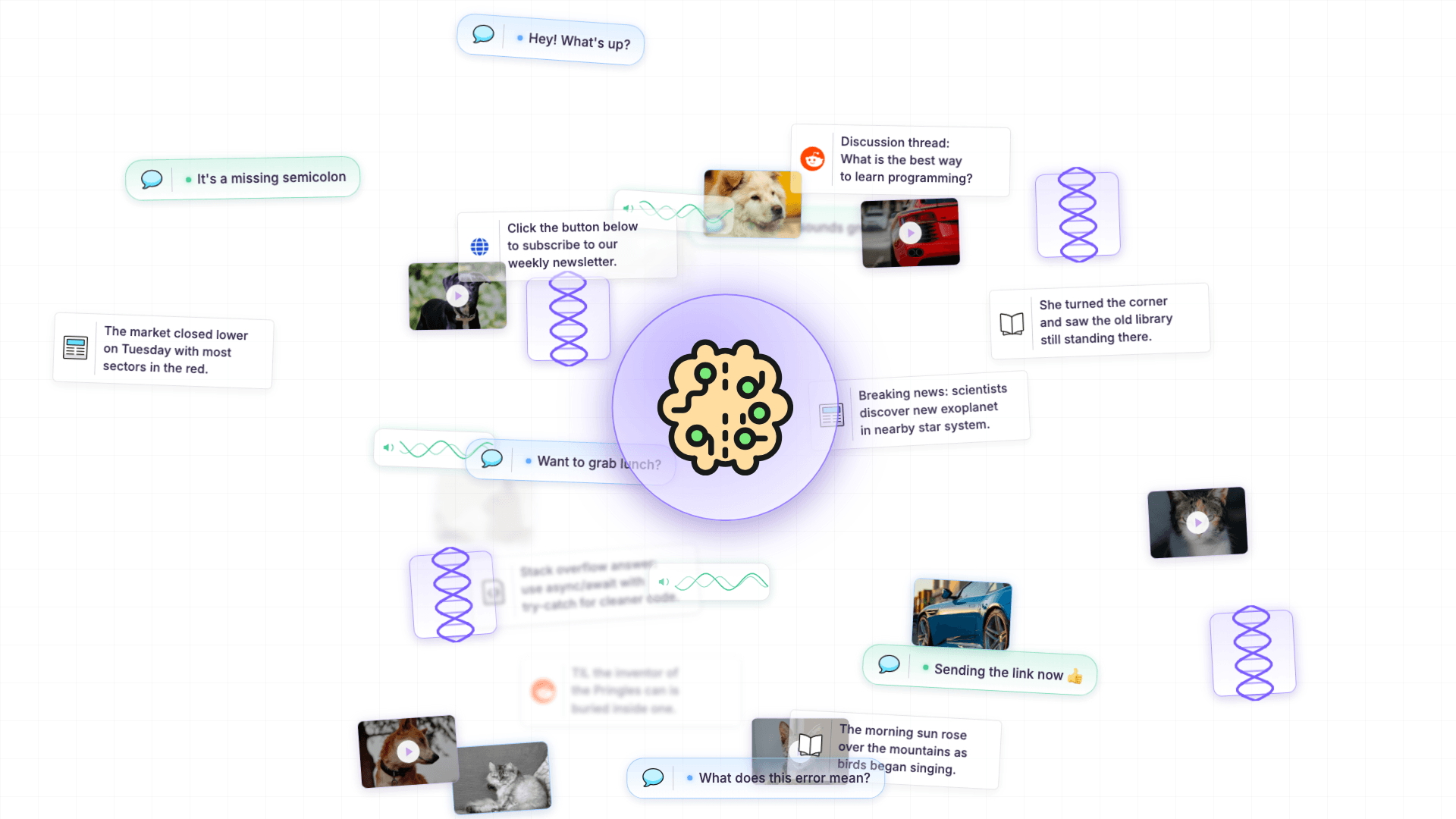

And by "human language," I mean text such as:

- Books

- Internet articles

- Website content

- Day-to-day conversations from forums like Reddit

In other words, it is the kind of language we use every day to communicate with each other.

And as I said before, the regional language doesn't matter.

The LLM was trained on text from many languages, including English, Hindi, Spanish, Mandarin, and so on.

Having said that, in 2026, the meaning of "Language" is becoming broader.

Modern LLMs are no longer limited to working with just text.

They have also evolved to work with images, voice, and video.

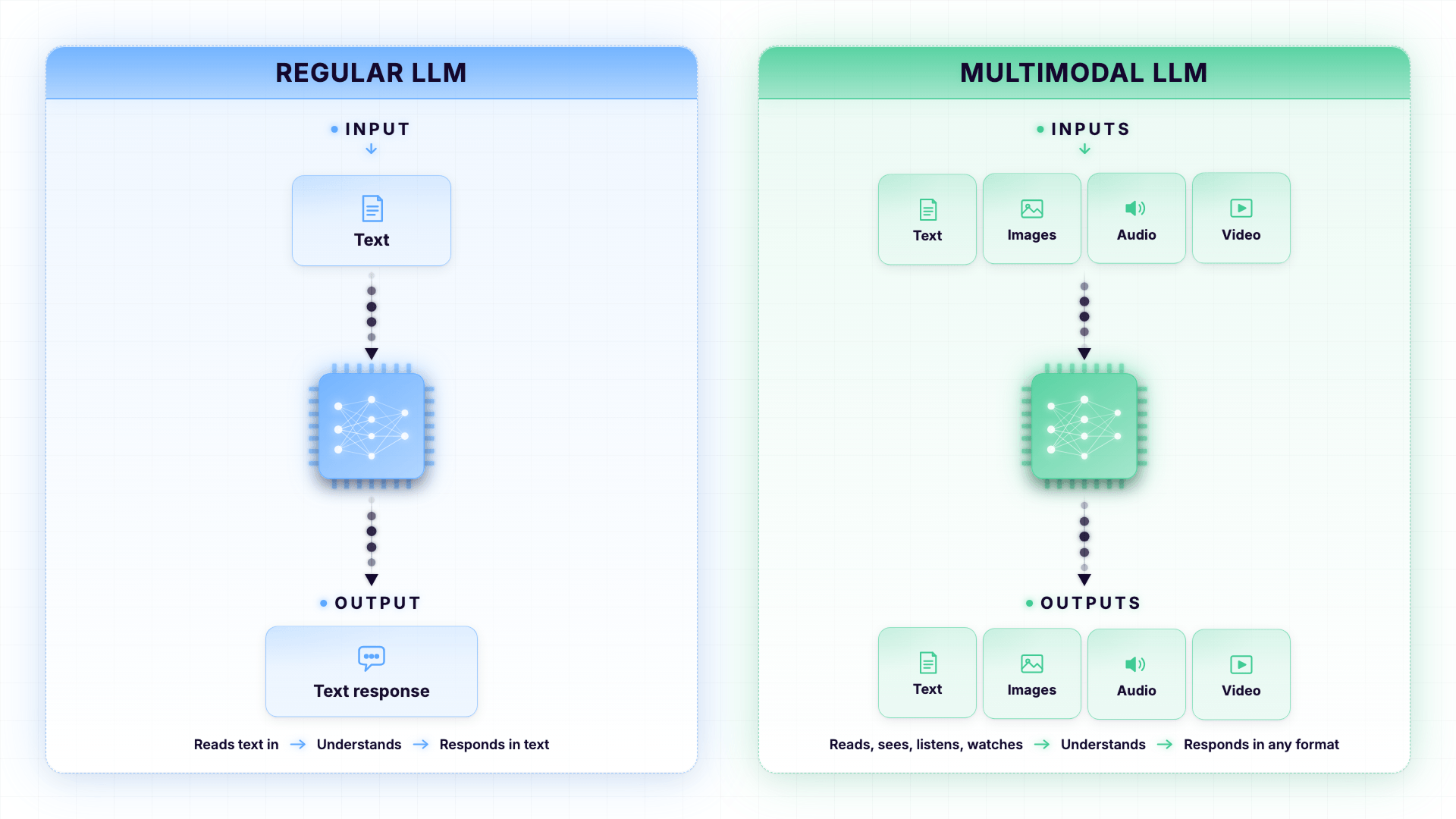

We call these modern LLMs Multimodal LLMs.

The "modal" part comes from "modality," which just means "type of media".

So multimodal = many types of media.

Think of a regular LLM as someone who can only read.

A Multimodal LLM is someone who can read, see, listen, and watch.

And honestly, you have probably already used a Multimodal LLM without realizing it.

Have you ever uploaded a photo to ChatGPT and asked, "What is in this picture?"

That is a Multimodal LLM at work.

It looked at the image and described it back to you in words.

Have you ever had a voice conversation with ChatGPT on your phone?

That is multimodal too.

The model listened to your voice, understood what you said, and replied to you out loud.

Pretty cool, right?

A few years ago, none of this was possible.

LLMs could only read and write text.

If you wanted to ask the AI about a photo, you had to describe the photo in words first. Now, you just upload it.

This is a huge step forward because most of the things we deal with every day are not just text.

We deal with photos, voice messages, screenshots, videos, PDFs, and so on.

A Multimodal LLM can handle all these different types of inputs. Not just text.

And that is all you need to know about Multimodal LLMs for now.

Next...

What makes a language model "Large"?

The word 'Large' tells you that an LLM is trained with large amounts of text.

And in the case of multimodal LLMs, the training also includes large amounts of images, voice, and video. Not just text.

When the model itself is much smaller, we call it a Small Language Model (SLM).

SLMs are fast, cheap, and surprisingly useful.

In fact, in 2026, much of the 'AI magic' you see in your phone and browser is actually powered by these lightning-fast small models.

But SLMs vs LLMs, when to use which, how to combine them, and the cost math behind them, is an important topic on its own.

So, we will talk about it in a dedicated lesson later in this series.

For now, let's put our focus on LLMs.

But this raises a few important questions.

Why does the size of training data even matter?

Isn't a model that has read 10,000 books already smart enough?

And the answer to these questions is not simple. You will understand this shortly.

But size definitely matters because it defines what an LLM can and can't do.

If you train an LLM on a small number of books, let's just say, 10,000 books, it can do basic things.

For example:

- It can complete sentences

- It can answer simple questions

- It can write short paragraphs

But it struggles to perform complex tasks.

On the other hand, if you train the same model on 10 million books, it can do advanced things.

It starts to:

- Help you plan a 7-day trip from start to finish

- Help you debug code by figuring out where the error is

- Help you walk through a tough decision by weighing the pros and cons

- Help you understand complicated topics by explaining them simply

- Help you catch jokes, sarcasm, and metaphors

- Help you solve riddles

Researchers call this "emergent behavior."

Simply put, certain abilities only "emerge" once the model has been trained on enough data.

Below a certain scale, those abilities just don't exist.

Above that scale, they appear almost like magic.

At that point, it becomes hard to tell AI-generated work from human work.

"Okay! Wait. Are you saying these abilities were not directly programmed into the model?"

Yep!

That's the magical part.

No one wrote a rule that said: "if asked a riddle, here is how you should think about it".

No one programmed sarcasm detection.

No one taught the model to write poetry.

Heck, I don't understand sarcasm or poetry myself.

These abilities emerged on their own.

This is possible because the model saw enough examples of language during training to figure them out.

This is why the size of the training data matters so much in the world of AI.

The bigger the training data, the more patterns the model can recognize.

The more patterns it can recognize, the more capable it becomes.

This is also why companies like OpenAI, Anthropic, Google, and Meta keep building models with larger and larger training datasets.

They are basically trying to unlock new emergent abilities.

But here is the interesting part.

The size and quality of the training data are only half the story.

The other half is the LLM's brainpower.

The brainpower decides how much an LLM can actually absorb from all that training data.

"How do we know which LLM has more brain power than others?"

Simple. This is where an LLM's parameters come in.

Every AI model has something called parameters.

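Think of parameters like the brain cells (neurons) of the LLM. Strictly speaking, they are closer to the strengths of the connections between brain cells: the numbers that get adjusted every time the model is corrected during training.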

The more parameters an LLM has, the more brainpower it has to absorb training data and learn nuanced patterns.

A small LLM has fewer parameters. So, it can only handle basic things like finishing sentences and answering simple questions.

A large LLM has billions more parameters. So, it can handle nuanced things like reasoning and sarcasm.

In fact, the parameter count is so important that LLM names often include it right in the name.

For example:

- Llama 3 70B means it has 70 billion parameters

- Mistral 7B means it has 7 billion parameters

- Qwen 32B means it has 32 billion parameters

- Gemini Ultra does not carry a number in its name, but it is estimated to be in the trillion-parameter range

You will spot these "B" labels (which stand for "billion") all over Hugging Face, GitHub, and AI documentation.

So, the next time you see a model called "Llama 3 8B" or "Qwen 32B", you will know exactly what those numbers mean.
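If you like seeing things in code, here is a small Python sketch that reads the "B" label out of a model name. The function name `parameter_count` is made up for this example, and it only handles the simple "number followed by B" pattern described above.

```python
import re

def parameter_count(model_name):
    """Return the parameter count encoded in a name like 'Llama 3 70B', or None."""
    # Look for a number (optionally with decimals) directly followed by "B".
    match = re.search(r"(\d+(?:\.\d+)?)\s*B\b", model_name)
    if match is None:
        return None
    return int(float(match.group(1)) * 1_000_000_000)

for name in ["Llama 3 70B", "Mistral 7B", "Qwen 32B", "Gemini Ultra"]:
    print(name, "->", parameter_count(name))
```

For "Llama 3 70B" this gives 70000000000, and for "Gemini Ultra" it gives None because there is no "B" label in the name. Note that the "3" in "Llama 3" is skipped because it is a version number, not a parameter count.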

Roughly speaking, the parameter scale today looks like this:

- Small models typically have 1 to 8 billion parameters (like Mistral 7B, Llama 3 8B, Phi-3)

- Mid-sized models typically have 30 to 70 billion parameters (like Llama 3 70B, Qwen 32B)

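- Top-tier flagship models have, or are believed to have, hundreds of billions or even more than a trillion parameters (like GPT-4, Claude Opus, Gemini Ultra, DeepSeek V3). Most of these counts are estimates, because the companies do not publish them.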

That is a lot of brain cells.

So when we say "Large Language Model," we are actually talking about two things working together:

- An LLM trained on a large amount of data

- An LLM with lots of parameters (lots of brainpower)

You need both of these to get the kind of capability we expect from modern LLMs.

If an LLM has lots of parameters but is trained on tiny data, it won't have much to learn from.

If an LLM is trained on lots of data but has very few parameters, it won't have enough brainpower to truly absorb everything it has read.

You get the idea, right?

Anyway, now that you understand what makes a Large Language Model "Large," let's talk about what these models can actually do.

What can LLMs do?

LLMs can help you with a wide variety of tasks.

And the list keeps growing as the LLMs improve day by day.

Let's talk about some of them quickly.

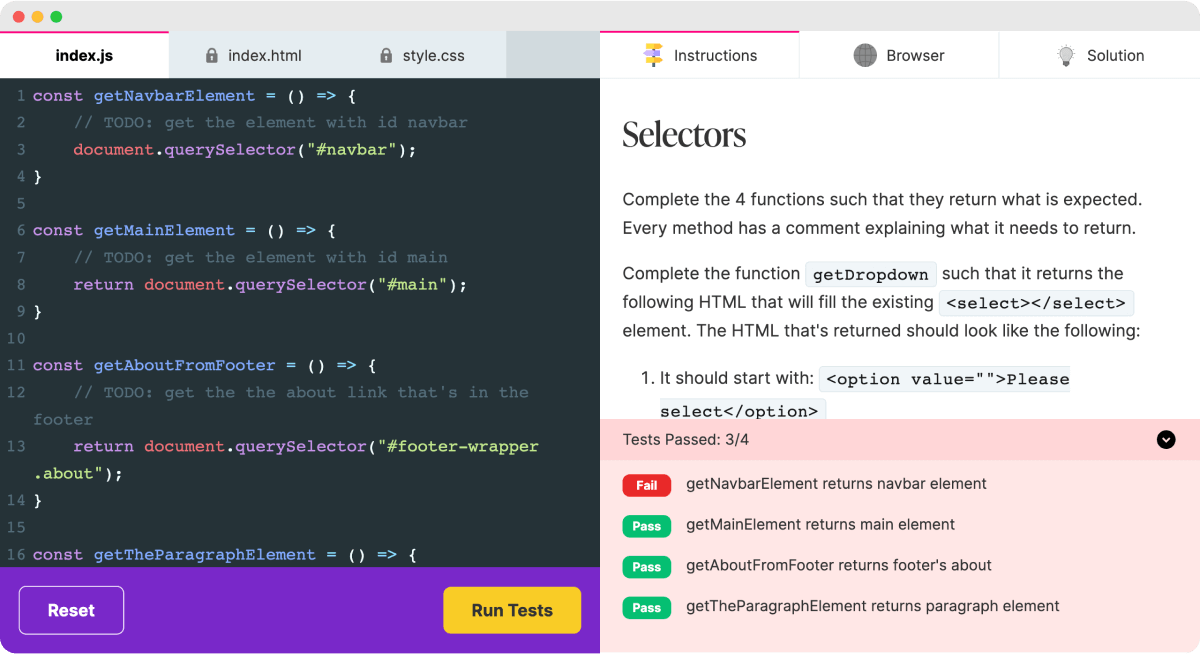

Vibe Coding

For example, you might have heard about Vibe coding.

Vibe coding is nothing but using plain English to build software applications and mobile apps.

Before LLMs became accessible to all of us, I used to write code for hours and hours using a programming language like JavaScript.

Now, I just provide instructions in English about what I want to build, and the LLM writes the code for me.

For example, it took me 4 complete months to build a JavaScript code assessment tool using Svelte, a JavaScript framework.

But I asked an LLM (Claude Opus) to rebuild it from scratch using ReactJS instead of Svelte, and it took less than a day.

How powerful is that?

Not just coding, LLMs can help you with...

Writing tasks

- Drafting emails (cold outreach, follow-ups, replies)

- Writing blog articles, social media posts, and newsletters while maintaining your tone of voice

- Creating product descriptions for an online store

- Generating website content for landing pages, about us, and other usual business pages

Reading and summarizing tasks

- Translating text between languages

- Analyzing competitors' social media posts and telling you how to beat them

- Summarizing a long PDF into digestible points that you can easily remember

- Explaining a research paper in simple language

- Extracting key points from a Zoom meeting

- Reviewing a contract and pointing out unusual clauses

Thinking and reasoning tasks

- Coming up with content marketing strategies

- Assessing and validating existing marketing plans

- Comparing two options (e.g., Mac vs Windows for video editing)

- Helping you plan a 7-day trip to Japan

- Walking you through a difficult decision

- To be honest, I have a major problem with taking a consultation from ChatGPT and hearing what I want to hear before making a bad decision 😜

Personal tasks

- Acting as a study buddy

- Helping you write a wedding speech

- Explaining a medical report in plain English (always verify with a doctor, though)

- Practicing for a job interview

And the list goes on and on...

Feeling powerful yet?

Anyway...

Here are some popular LLMs you should know about

GPT (by OpenAI)

GPT is the family of LLMs that powers ChatGPT.

The launch of ChatGPT in late 2022 is what made the whole world wake up to AI.

It is friendly, fast, and great for general use.

You can access it at chatgpt.com.

Gemini (by Google)

Gemini is Google's family of LLMs.

It is integrated into Google products like Gmail, Docs, and Search.

You can access it at gemini.google.com.

Claude (by Anthropic)

Claude is known for thoughtful, well-written responses.

The Claude family has two well-known models: Claude Opus and Claude Sonnet.

Opus is the advanced model.

It is especially good for programming. I personally believe it was trained for generating great code.

From personal experience, I get a lot of bugs when I try to generate code with ChatGPT and Gemini.

But Claude generates almost bug-free code for me.

Your experience could be different.

Either way, you still need to verify and test the code, though.

Also, Claude LLMs are great for writing long-form articles and careful reasoning.

Personally, I like Claude's writing style for my articles and content work.

You can access it at claude.ai.

Other LLMs worth knowing

Kimi (by Moonshot AI) is a suite of open-source LLMs that is gaining attention for generating reliable code, similar to Claude Opus.

In my experience, it is still behind Opus in terms of code reliability and frontend UI code generation, but it is getting there.

Llama (by Meta) is another great open-source family of LLMs that developers can run on their own computers.

It is helpful for analyzing and talking with private data that you can't risk uploading to ChatGPT or Claude.

Next, there is Grok (by xAI). It is available inside the X (formerly Twitter) platform.

Finally, DeepSeek and Qwen, powerful LLMs from China, are growing in popularity.

As you can see, LLMs are everywhere. They are not rare anymore.

So, if you are just starting out, pick one (ChatGPT or Claude is a great first choice) and stick with it for a few weeks.

Once you get comfortable, you can try the others and see which one feels best for you.

And that's all for this lesson.

What's coming next in this series

Now that we understand what a Large Language Model is, in the next lesson, we will dig into tokens and the context window.

Understanding them will tell us why LLMs cost what they cost and why long conversations sometimes seem to forget things.

So, if you have not already, please subscribe to my newsletter for upcoming updates.

I will see you in the next lesson. Bye!